Chapter 5: Overview and Interaction Techniques

5.1 Introduction to Constructive Research

Having explored empirical research methods in the previous chapters, we now turn our attention to another fundamental approach in HCI research: constructive research.

Constructive research is unique to Human-Computer Interaction (HCI) research, where researchers create practical solutions that improve how humans interact with technology. Below are some examples of such research:

- Bubble Cursor is a pointing technique that uses a dynamic area cursor for quick acquisition of on-screen targets (Grossman & Balakrishnan, 2005)

- earPod introduced a menu widget that uses touch and audio feedback, enabling users to efficiently navigate menus without looking at the screen (Zhao et al., 2007)

- Draco offered an application for authoring and controlling complex animations through constraints (Chen et al., 2018)

- D3 (Data-Driven Documents) provided a framework that allows developers to create interactive data visualizations for the web (Bako et al., 2023)

While all these examples fall under the umbrella of constructive research, they illustrate a clear distinction in both scope and focus. Bubble Cursor and earPod, for example, are interaction techniques designed to solve targeted challenges — such as improving target acquisition or enabling menu navigation with minimal visual attention. On the other hand, Draco and D3 represent more expansive systems or toolkits: Draco offers a comprehensive platform for authoring constraint-based animations, while D3 empowers developers to build a wide variety of web-based data visualizations.

Recognizing these differences is important, as it highlights two primary categories of artifacts within constructive research: Interaction Techniques and Systems/Toolkits. Each category not only addresses different types of problems but also follows its own trajectory in terms of innovation, evaluation, and impact. In the following sections, we will explore how these categories shape the approach and contributions of constructive research in HCI. This chapter will focus on interaction techniques, while systems and toolkits will be covered in the next.

Brief Overview of Interaction Techniques

Interaction techniques are fundamental building blocks in Human-Computer Interaction (HCI) that define specific methods for accomplishing common interaction tasks like pointing, menu selection, panning, and zooming. These techniques specify how users can manipulate digital objects to achieve frequently performed operations. They consist of three key components: (1) input actions performed by the user (e.g., mouse movements, key presses, gestures), (2) system processing and interpretation of those inputs, and (3) output feedback presented to the user (e.g., visual, auditory, or haptic responses). These components work together in a tightly coupled manner to create fluid interaction loops between the user and system.

What distinguishes interaction techniques from other HCI artifacts is their focus on optimizing these commonly used interaction approaches. Since these fundamental operations occur frequently across many applications, improvements to the underlying techniques can benefit any system that adopts them. For example, the Bubble Cursor (Grossman & Balakrishnan, 2005) enhances the basic pointing operation by dynamically resizing the cursor's activation area based on nearby targets, making target acquisition more efficient across any interface that implements it. The technique clearly defines the input (mouse movement), processing (bubble size adjustment algorithm), and output (visual representation of the bubble).
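The input, processing, and output split can be made concrete with a small sketch. The code below is a simplified illustration and not the authors' implementation: it assumes circular targets, and the `Target` class, the `bubble_cursor` function, and the sizing rule (grow the bubble until it encloses the closest target, but stop before it touches the second closest) are assumptions chosen for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class Target:
    x: float
    y: float
    radius: float


def bubble_cursor(cursor_x, cursor_y, targets):
    """Return (index of the captured target, bubble radius).

    Input: the cursor position. Processing: choose the nearest target and
    size the bubble. Output: the radius a renderer would draw.
    """
    if not targets:
        return None, 0.0
    centre_dist = [math.hypot(t.x - cursor_x, t.y - cursor_y) for t in targets]
    # Distance the bubble must grow to just touch each target's edge.
    intersect = [max(d - t.radius, 0.0) for d, t in zip(centre_dist, targets)]
    # Distance the bubble must grow to fully enclose each target.
    contain = [d + t.radius for d, t in zip(centre_dist, targets)]
    order = sorted(range(len(targets)), key=lambda i: intersect[i])
    closest = order[0]
    if len(order) == 1:
        return closest, contain[closest]
    second = order[1]
    # Enclose the closest target without reaching the second closest one.
    return closest, min(contain[closest], intersect[second])


if __name__ == "__main__":
    demo_targets = [Target(0, 0, 1), Target(10, 0, 1)]
    print(bubble_cursor(3, 0, demo_targets))  # (0, 4.0)
```

Because exactly one target is captured at any time, the user only needs to move the cursor closer to the intended target than to any other, rather than onto the target itself.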

Interaction techniques can range from simple direct manipulation methods to complex gestural interfaces, but they all aim to improve these core interaction building blocks that users rely on constantly. By focusing on enhancing these fundamental operations, interaction techniques serve as reusable components that can be incorporated into various systems to provide users with more efficient and learnable ways to accomplish common tasks.

While these tasks are essential to user interaction, each technique usually focuses on a single, well-defined purpose. Because of this narrow focus, only a limited set of design factors need to be considered. This enables researchers to examine each factor in depth, making it easier to pinpoint how specific design choices lead to improvements or trade-offs in performance. As a result, research on interaction techniques can provide clear evidence of design causality, demonstrating not only that a technique is effective, but also explaining precisely why and how it achieves its benefits.

The focused nature and narrower scope of interaction techniques make it easier to think about their contributions in terms of one of two primary approaches, or "routes." These routes, which we will discuss in the next section, help define the novelty and value of the work, whether it's by creating a completely new capability or by significantly improving an existing one.

5.2 Routes of Constructive Research

Having established the two primary categories of constructive research (interaction techniques and systems/toolkits), it is important to recognize that another key dimension shapes how research in these areas is conducted and evaluated: the research route. In other words, beyond what kind of artifact is being created, researchers must also consider how their work advances the field. This section introduces these research routes and explains their significance in constructive HCI research.

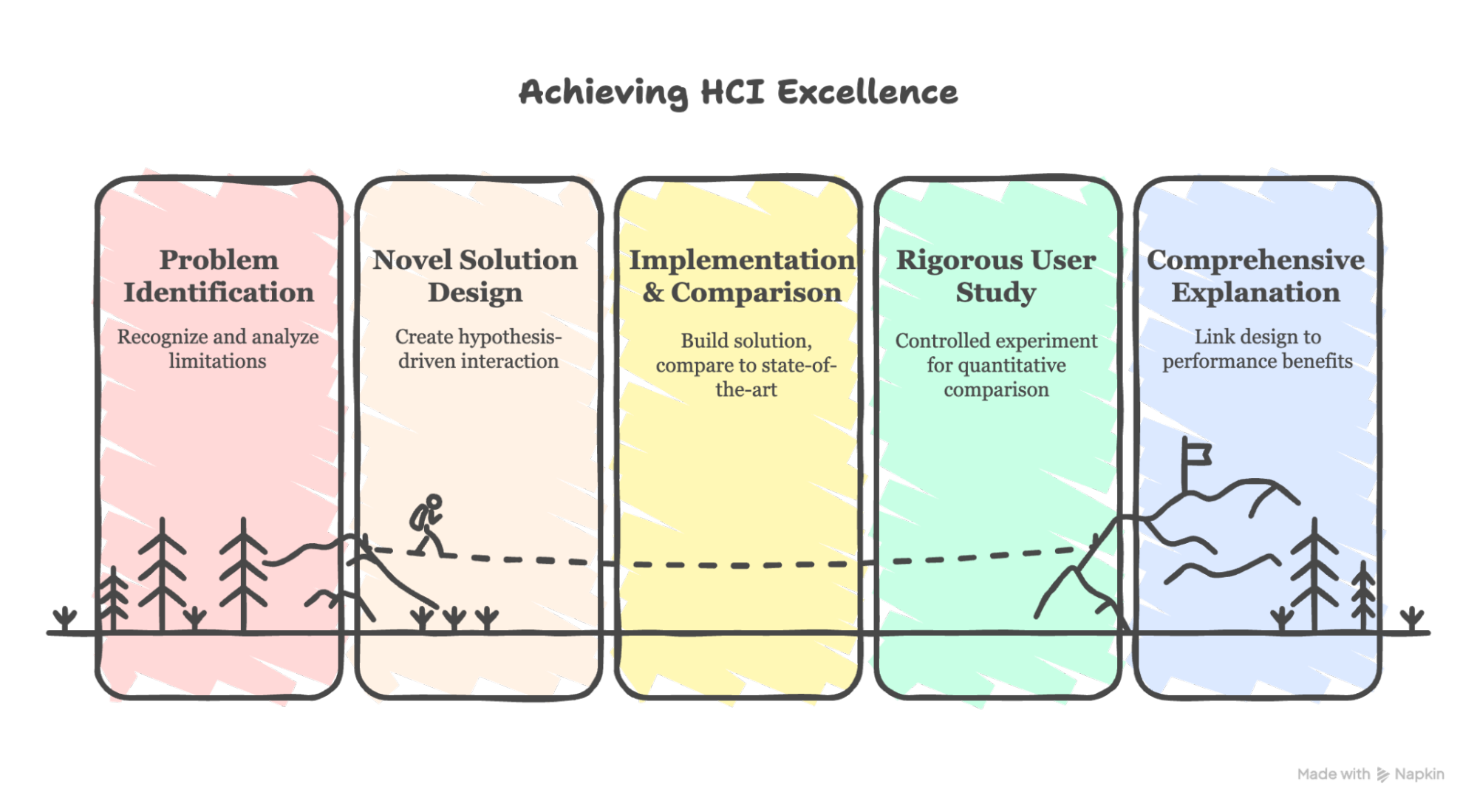

At this point, you might wonder: if techniques, systems, and toolkits are being created all the time (for instance, countless new apps appear in app stores every day), what makes a research artifact different from any other new application or tool? This is a crucial question, and as we discussed in earlier chapters (e.g., Chapter 2), a core tenet of research, including in HCI, is the creation of new, useful, and generalizable knowledge. In constructive research, this knowledge is not just described abstractly but is embodied and demonstrated through the artifact itself. The artifact serves as tangible proof of a novel concept, an improved solution, or a new capability that extends our understanding or abilities within interactive computing. To establish that an artifact indeed contributes such new knowledge, the work typically needs to demonstrate its value and novelty through one of two primary approaches or "routes": "be the first" or "be the best."

Both routes are ways of demonstrating useful, novel knowledge. "Be the first" prioritizes breaking new ground with innovative ideas, while "be the best" emphasizes refining existing approaches to achieve superior performance.

While this framework can apply universally to constructive research, it is more clearly demonstrated with interaction techniques. Because techniques are narrowly focused, it is relatively straightforward to define what makes one "first" or to measure whether it is "best" against an established baseline. For systems and toolkits, however, the distinction can be more complex. A system is typically a multifaceted integration of many features, some novel and others not. This complexity sometimes makes direct evaluation against a single baseline challenging, as it can be difficult to find an existing artifact that provides a fair point of comparison across all functionalities.

In the discussion below, we will use examples of both interaction techniques and systems/toolkits to illustrate the "be the first" and "be the best" routes. However, it is important to keep in mind that additional considerations are needed for system and toolkit research; these will be discussed in the next chapter.

5.2.1 Be the First: When There Is No Known Solution

The "be the first" route involves pioneering solutions for unexplored problems in interactive computing. While this route emphasizes novelty, it carries an implicit assumption: the new artifact must not only be novel but also demonstrate clear advantages or new capabilities (see Figure 5.1 for two examples).

Example

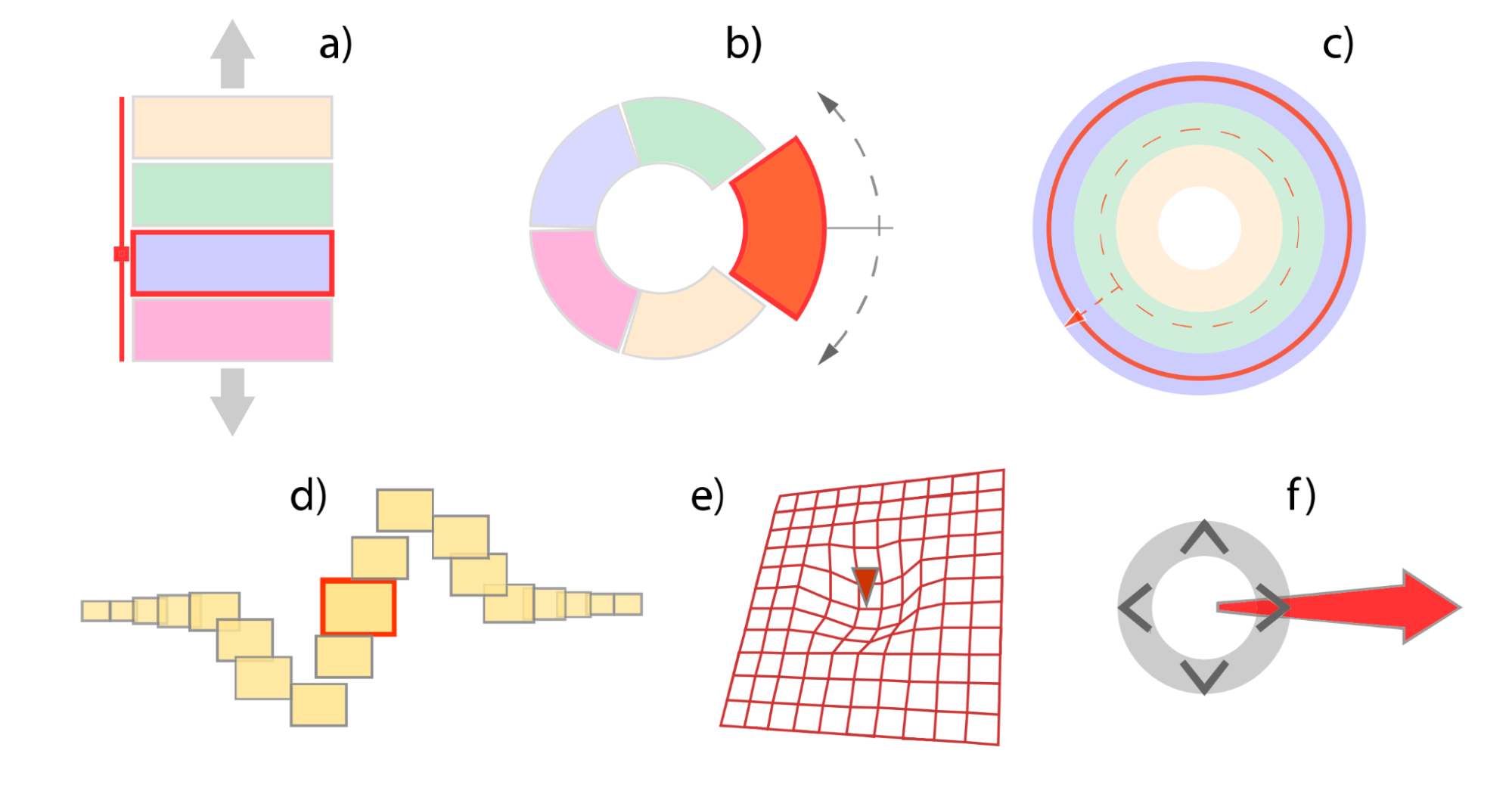

Figure 5.1: Left: Concept designs for pressure widgets, including (a) Flag, (b) Rotating Expanding Pie, (c) Bullseye, (d) TLSlider, (e) Pressure Grid, and (f) Pressure Marking Menu. Right: Illustration from the CrossY interface demonstrating the brush palette (left) and the main palette with a find/replace dialogue box (right).

One example of the "be the first" route is the Pressure Widget (Ramos et al., 2004), which introduced continuous pressure sensing as a novel input for user interfaces. Before this work, most widgets only used x-y position and simple button presses, overlooking the potential of pressure input available on some styluses and touch devices.

The Pressure Widget project explored how varying pressure could enable multi-state widgets, expanding interaction possibilities. Researchers mapped the design space for pressure-based controls and ran experiments to see how well users could select discrete targets by applying different pressure levels, with various feedback and confirmation methods.

Their findings revealed how many pressure levels users could reliably distinguish and which feedback techniques worked best, leading to a taxonomy of pressure-based widgets and their applications. By demonstrating both feasibility and user benefits, the Pressure Widget opened up new ways to design compact and expressive interfaces. Pressure Widget embodies the "be the first" approach by introducing and validating a new input modality, systematically exploring its design, and providing practical insights for using pressure sensitivity in HCI.
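As a rough illustration of what "selecting discrete targets by applying different pressure levels" involves, the sketch below divides a normalized pressure reading into equal-width levels. It is a minimal sketch under stated assumptions, not the mapping used in the paper: the function name `pressure_to_level`, the 0 to 1 pressure range, and the equal-width bins are all hypothetical choices.

```python
def pressure_to_level(pressure, n_levels):
    """Map a normalized pressure reading (0.0 to 1.0) to a discrete level.

    Assumes equal-width bins; readings outside the range are clamped.
    """
    if n_levels < 1:
        raise ValueError("n_levels must be at least 1")
    clamped = min(max(pressure, 0.0), 1.0)
    # int() truncates, so full pressure (1.0) is capped at the top level.
    return min(int(clamped * n_levels), n_levels - 1)


if __name__ == "__main__":
    # Four levels is an arbitrary choice for the demonstration.
    for reading in (0.0, 0.3, 0.6, 1.0):
        print(reading, "->", pressure_to_level(reading, 4))
```

The number of levels is precisely the kind of parameter that a foundational study has to determine empirically: too many levels and users can no longer hold a level reliably, too few and the widget loses expressiveness.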

Another illustrative example (Figure 5.1, Right) of the "be the first" route in system artifacts is the CrossY system (Apitz & Guimbretière, 2004). CrossY is a simple drawing application developed to demonstrate the feasibility and expressiveness of "goal crossing" as a fundamental interaction paradigm for graphical user interfaces. Prior to CrossY, the dominant paradigm in graphical interfaces was point-and-click, where users select and manipulate objects by pointing and clicking on them. While the concept of goal crossing (triggering actions by crossing boundaries with a pointer) had been discussed in the literature as a possible alternative, it had not been realized as the core mechanism in a fully functional system.

CrossY breaks new ground by being the first to implement and showcase a complete interface where goal crossing is the primary means of interaction. The system demonstrates that crossing is not only as expressive as point-and-click, but also enables new forms of fluid interaction. For example, CrossY allows users to compose commands in a more seamless and continuous manner, supporting the development of more fluid and flexible interfaces. This practical implementation provides concrete evidence that goal crossing can serve as a viable and even advantageous alternative to traditional interaction techniques.

By introducing and validating this new interaction paradigm within a working system, CrossY embodies the "be the first" approach: it pioneers a novel concept, demonstrates its feasibility through a real artifact, and opens up new possibilities for interface design. The contribution of CrossY lies not only in the technical implementation, but also in the new knowledge it provides to the HCI community about the potential and practicality of goal crossing as a foundational building block for user interfaces.
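To show what "triggering actions by crossing boundaries" means computationally, the sketch below tests whether the pointer's movement between two consecutive samples crosses a goal drawn as a line segment. It is an illustrative sketch, not CrossY's implementation: the function names and the choice to model a goal as a straight segment are assumptions.

```python
def _cross(origin, a, b):
    """Z component of the cross product of (a - origin) and (b - origin)."""
    return (a[0] - origin[0]) * (b[1] - origin[1]) - (a[1] - origin[1]) * (b[0] - origin[0])


def crosses_goal(prev_pos, curr_pos, goal_start, goal_end):
    """Return True if the pointer path prev_pos -> curr_pos crosses the goal.

    Uses the standard orientation test for a proper segment intersection:
    the two pointer samples must lie on opposite sides of the goal, and the
    goal's endpoints must lie on opposite sides of the pointer path.
    Touching an endpoint or moving along the goal does not count.
    """
    d1 = _cross(goal_start, goal_end, prev_pos)
    d2 = _cross(goal_start, goal_end, curr_pos)
    d3 = _cross(prev_pos, curr_pos, goal_start)
    d4 = _cross(prev_pos, curr_pos, goal_end)
    return d1 * d2 < 0 and d3 * d4 < 0


if __name__ == "__main__":
    goal = ((-1.0, 0.0), (1.0, 0.0))
    print(crosses_goal((0.0, -1.0), (0.0, 1.0), *goal))  # True
    print(crosses_goal((2.0, -1.0), (2.0, 1.0), *goal))  # False
```

Because the test runs on every pair of consecutive pointer samples, a single continuous stroke can cross several goals in sequence, which is what allows commands to be composed without lifting the pen.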

While "be the first" research charts new territory, much of the progress in HCI comes from building upon and improving existing foundations. Improving on solutions that already exist is the focus of the "be the best" research route.

5.2.2 Be the Best: When a Partial Solution Exists

The "be the best" route focuses on enhancing existing artifacts by developing techniques that outperform current state-of-the-art solutions in specific scenarios or performance metrics. The goal is to raise the bar by improving capabilities, efficiency, or user experience of established solutions (See Figure 5.2 for two examples).

Example

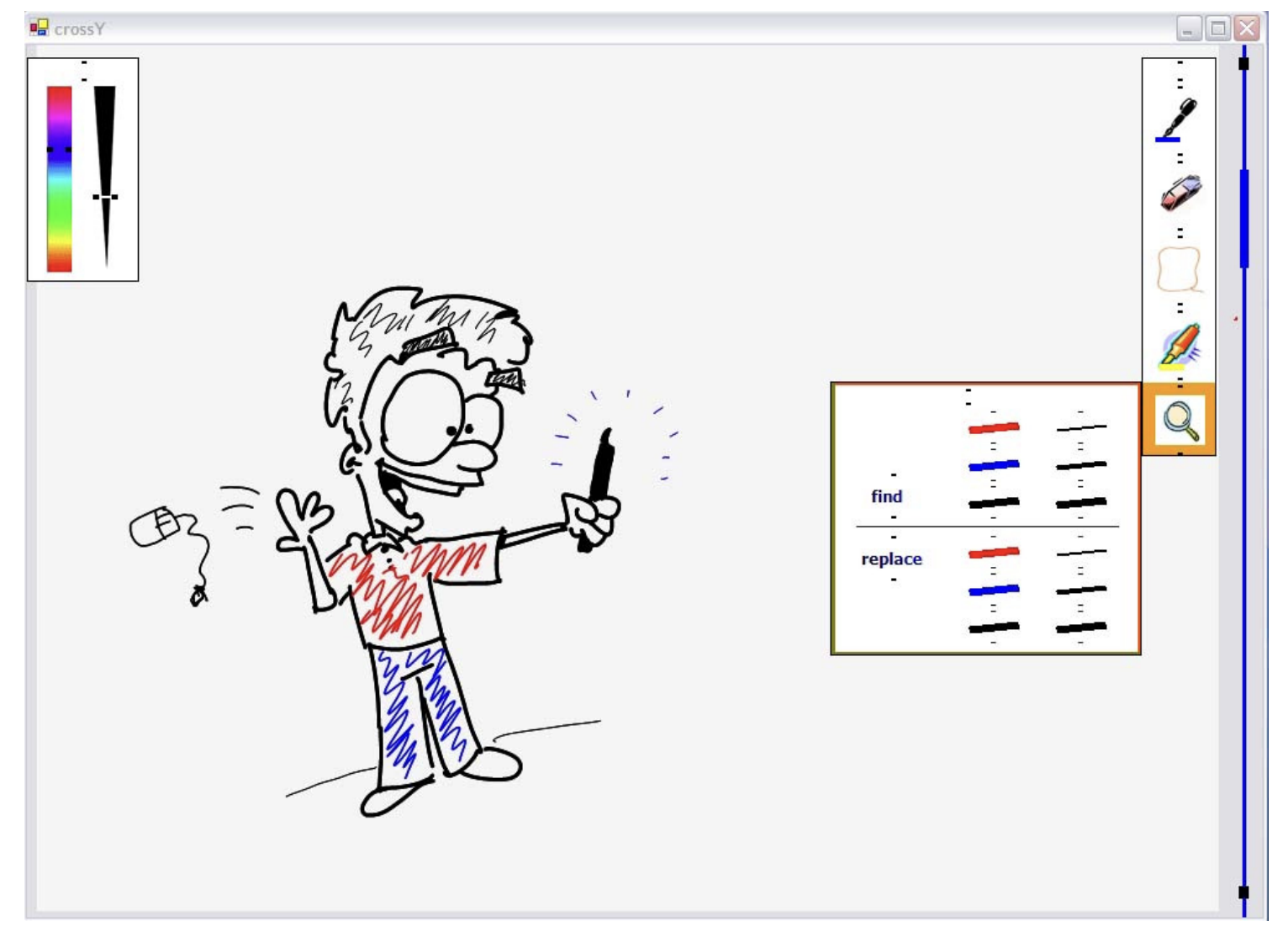

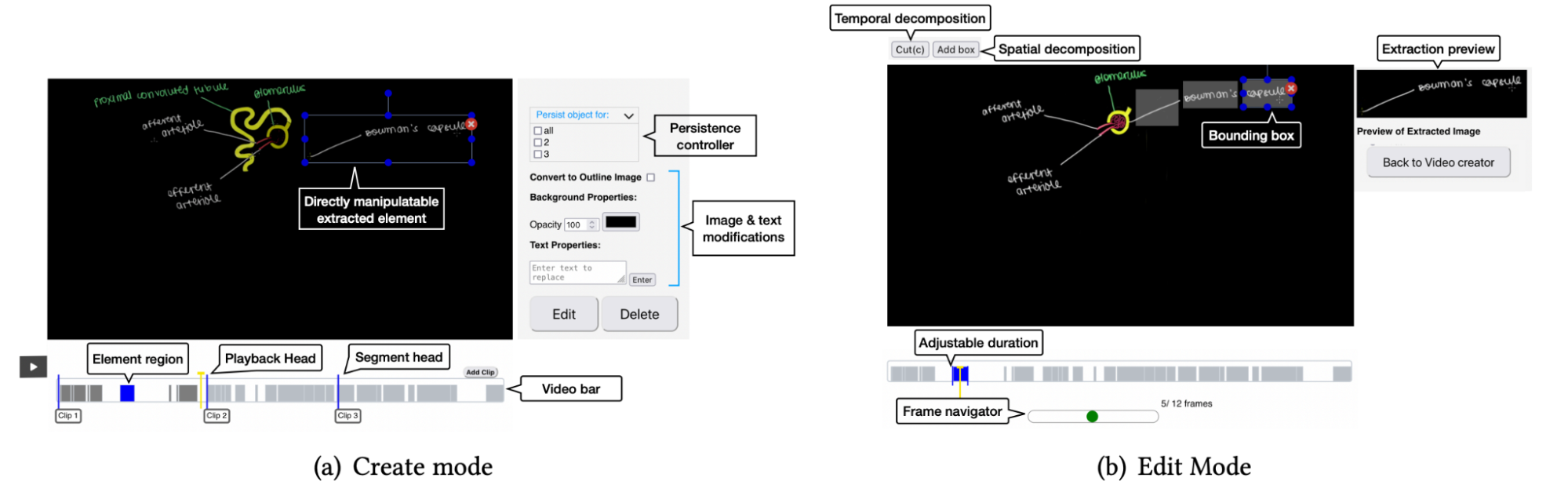

Figure 5.2: Left: (a) Compound mark technique (b) Simple mark technique. Images on the left show selection from the popup radial menu. Images on right show the same corresponding selection made using the marks alone without popping-up the menu. Right: The two modes of the VidAdapter interface: (a) Create mode, which allows direct manipulation of elements and their regions in the video bar for reorganisation and visual appearance modification, and (b) Edit Mode, which allows fine-grained spatial and temporal restructuring of the content extracted by the automatic pipeline.

For example, in the paper "Simple vs. compound mark hierarchical marking menus" (Zhao & Balakrishnan, 2004), researchers developed a variant of hierarchical marking menus that uses a series of inflection-free simple marks instead of the traditional single "zig-zag" compound mark. Through pilot studies and theoretical analysis, they demonstrated that their simple mark approach could significantly increase the number of menu items that could be selected efficiently and accurately. Their user experiments showed that compared to the compound mark technique, the simple mark technique enabled faster and more accurate menu selections, particularly for menus with many items where the compound mark technique struggled. Additionally, their technique required less physical input space, making it especially suitable for small pen-based devices. This exemplifies how researchers can improve upon an existing interaction technique by making key modifications that lead to measurable performance gains.
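The idea of selecting with a series of simple marks can be sketched as follows: each straight stroke is classified by its direction into one menu sector, and the sequence of strokes gives the path through the menu hierarchy. This is an illustrative sketch rather than the authors' recognizer; the function names, the default of eight items per level, and the convention that item 0 points right with indices increasing counter-clockwise (y axis pointing up) are assumptions.

```python
import math


def stroke_to_item(dx, dy, n_items=8):
    """Classify one straight stroke (dx, dy) into a menu item index."""
    if dx == 0 and dy == 0:
        raise ValueError("stroke has no direction")
    sector = 2 * math.pi / n_items
    angle = math.atan2(dy, dx)  # in the range -pi..pi, 0 points right
    # Round to the nearest sector centre and wrap negative indices.
    return round(angle / sector) % n_items


def select_path(strokes, n_items=8):
    """Turn a series of simple marks into a path through the menu levels."""
    return [stroke_to_item(dx, dy, n_items) for dx, dy in strokes]


if __name__ == "__main__":
    # Two separate strokes: right, then down.
    print(select_path([(1.0, 0.0), (0.0, -1.0)]))  # [0, 6]
```

Since every stroke is recognized independently, the strokes can be drawn on top of each other, which is consistent with the smaller input space the paragraph above describes.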

Another example is VidAdapter (Ram et al., 2023), a tool designed to improve the process of adapting lecture videos for different viewing contexts, such as mobile devices or head-mounted displays. The researchers identified that while video lectures are widely used, adapting them with traditional video editing software is a difficult and slow process. VidAdapter addresses this by automatically extracting key elements from the video (like the presenter and the blackboard content) and allowing users to directly manipulate and reorganize these elements. In their evaluation, they compared VidAdapter against traditional video editors for the task of adapting lecture videos. The results showed that VidAdapter was not only strongly preferred by experienced users but also improved the efficiency of the adaptation process by over 53%. This work is a prime example of the "be the best" approach, as it identifies a clear limitation in existing tools and provides a new solution that offers a significant and quantifiable improvement in performance for a specific, practical task.

5.3 Key Dimensions of Constructive Research in Interaction Techniques

5.3.1 "Be the First" Research in Interaction Techniques

In this section, we delve into the process of conducting "be the first" research for interaction techniques. We will first present a common "recipe"—a sequence of activities typically found in successful projects of this nature. This recipe outlines the key stages, from identifying a new capability to validating its benefits. To make this recipe more concrete, we will then walk through a case study of the "Mouth Haptics" paper, showing how it follows this pattern. Finally, we will take a closer look at one of the most critical activities in this process: the exploration of the design space.

Common Components in "Be the First" Interaction Technique Papers

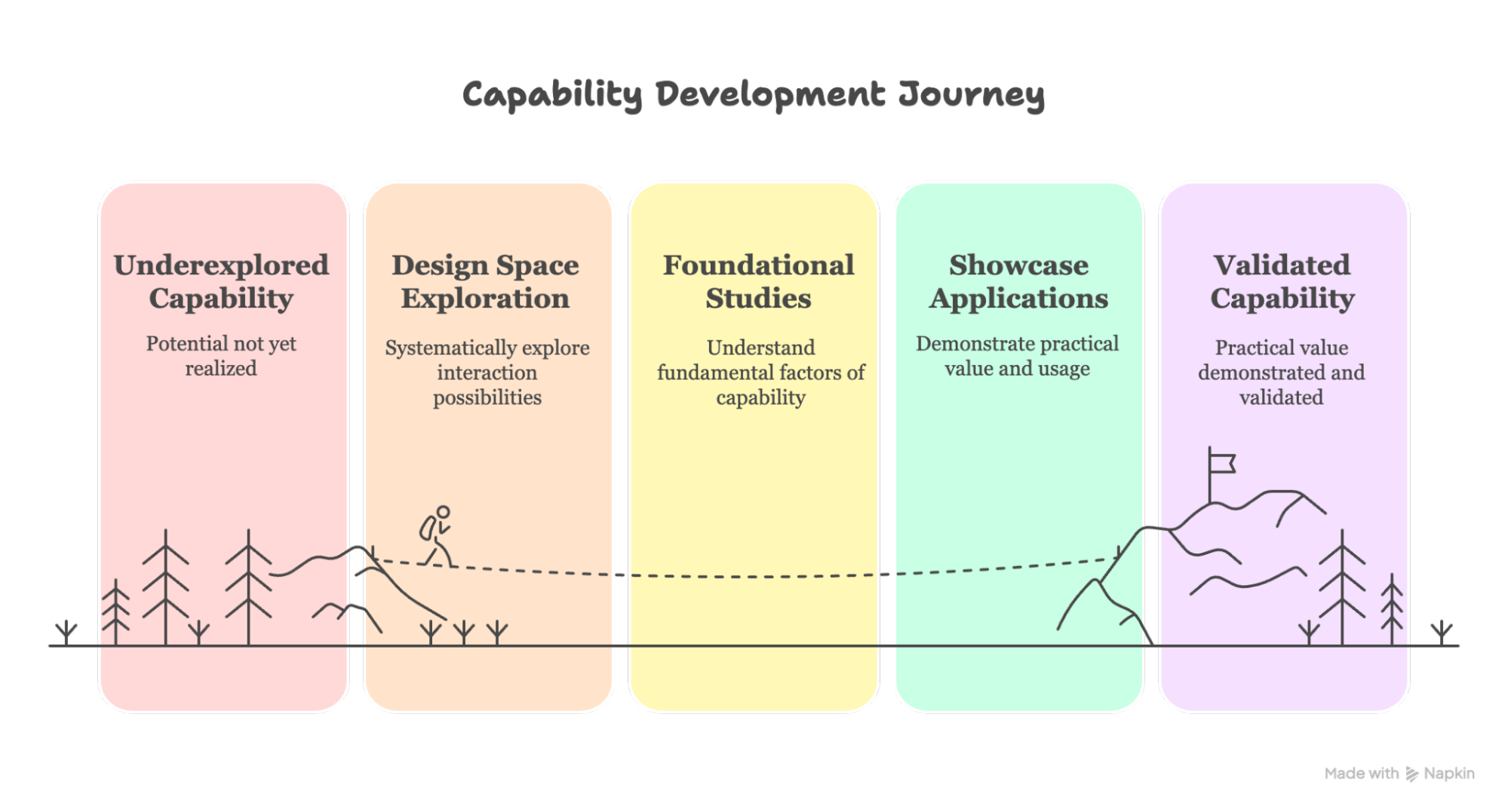

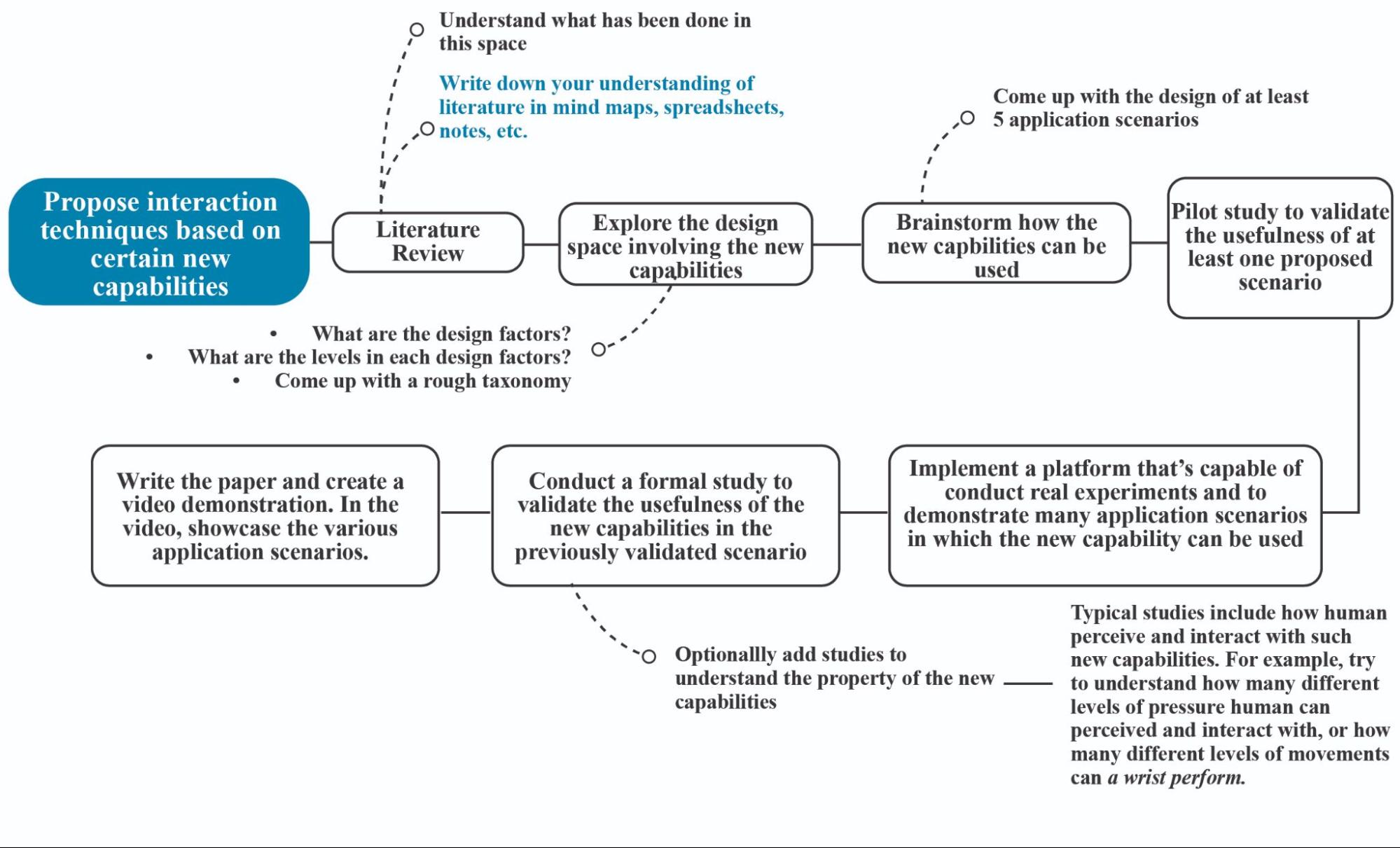

While there are no strict requirements for innovation, successful "be the first" papers typically present a common set of components (see Figure 5.3). We refer to these as "components" to emphasize that they are the key contents you will typically see in a final paper, not necessarily a procedural recipe for how the research was conducted. It is crucial to understand that the research process to generate such content does not necessarily follow the same order as it appears in the paper; in fact, the workflow is often iterative and non-linear. In this section, we will discuss these key components. Later, we will cover the best practices of the workflow to obtain such content.

Figure 5.3: Recipe for Technique (be the first)

- Identify a New or Underexplored Capability: The journey begins by identifying a novel capability that has been overlooked or is newly available. This could be a new sensor, an underutilized input channel, or a new context of use. For example, Pressure Widget (Ramos et al., 2004) recognized that while some styluses could sense pressure, this continuous input was largely ignored in interface design. The new capability was, therefore, "using continuous pressure as a primary input modality." Similarly, HoverWidget (Grossman et al., 2006) harnessed the hover state of a digital pen (its proximity to a tablet surface without touching it) as a new channel for interaction. SLAP Widgets (Weiss et al., 2009) explored the novel combination of tangible, physical widgets with the capabilities of multitouch tabletops.

- Conduct a Design Space Exploration: Once a capability is identified, the next step is to systematically explore its design space — the range of possible ways the interaction can be designed. This involves mapping out variations in input, output, and feedback. This exploration demonstrates rigor and provides a valuable map for future researchers, showing that the proposed design is a reasoned choice among many alternatives. For example, the HoverWidget paper explicitly lays out a design space for hover interactions, considering dimensions like the distance of the hover and the type of feedback provided. More examples of design space exploration are discussed later.

- Perform Foundational Studies to Understand the Capability: Before building a complete system, it is crucial to understand the fundamental factors related to the new capability. This often involves controlled experiments to answer basic questions about perception, motor control, and usability. For instance, the Pressure Widget researchers first conducted a study to answer a fundamental question: how many distinct pressure levels can a user reliably apply with a stylus? They also investigated which forms of visual feedback were most effective. This foundational knowledge is essential for designing effective interaction techniques.

- Showcase Applications and Usage Scenarios: With a solid understanding of the capability, the next step is to demonstrate its practical value. This involves designing and showcasing a set of compelling usage scenarios that make the potential of the new technique tangible. The goal is to inspire other designers and researchers by illustrating how the technique can solve real problems or enable new, expressive interactions. The papers for Pressure Widget, HoverWidget, and SLAP Widgets all feature a rich set of example applications, often accompanied by video demonstrations, to achieve this.

- Conduct a User Study to Validate Benefits: Finally, to solidify the contribution, researchers often conduct a formal user study to validate the benefits of their new technique. While "be the first" research may not have a direct competitor, the study typically compares the new technique against a baseline or the conventional way of performing a task. This provides evidence of the technique's advantages, such as improved speed, accuracy, or user satisfaction. For example, the SLAP Widgets paper included a "Knob Performance Task" that compared their tangible 'Knob' widget against standard virtual on-screen controls for video navigation. The results showed that the SLAP widget improved performance, providing concrete evidence of its value.

A case study illustrating the "be the first" strategy: "Mouth Haptics in VR using a Headset Ultrasound Phased Array" (CHI 2022, Best Paper)

To make this recipe more concrete, let's examine how it applies to a real-world example of "be the first" research. We will use the award-winning paper, "Mouth Haptics in VR using a Headset Ultrasound Phased Array," as a case study (Shen et al., 2022). By analyzing this paper, we can see how the authors systematically followed the key stages of our recipe to introduce and validate a novel interaction technique. The following breakdown will map the paper's contributions to each of the five steps, from identifying the new capability to validating its benefits.

The "Mouth Haptics" paper introduces a novel way to enhance virtual reality (VR) experiences by providing tactile feedback directly to the user's mouth. The core idea is to use an array of ultrasonic transducers, mounted on a VR headset, to project focused airborne acoustic energy onto the user's lips, teeth, and tongue. This creates a wide range of haptic sensations — from the feeling of a spider crawling across your lips to the splash of rain on your face — without any physical contact. By targeting the mouth, one of the most sensitive parts of the human body, this technique opens up a new channel for creating deeply immersive and compelling virtual worlds.

This research is a quintessential example of the "be the first" approach. In the following sections, we will analyze the Mouth Haptics paper through the lens of our five-stage recipe to see how the authors systematically introduced and validated this new interaction technique.

New Capability in HCI

The paper introduces the novel capability of rendering haptic effects directly onto the user's mouth in virtual reality. While previous haptic research focused on handheld controllers and wearables, this work targets the mouth — an area whose high tactile sensitivity (second only to the fingertips in mechanoreceptor density) represents an untapped channel for sensory feedback. This approach opens a new avenue for creating more immersive VR experiences.

Design Space Exploration

The authors conducted a systematic exploration of the design space for mouth-based haptics. Given the narrow focus on a specific technology (ultrasound) and target area (the mouth), they were able to perform a relatively comprehensive exploration. They structured this exploration by dividing it into two key aspects: the input technology used to generate the haptics and the resulting output sensations perceived by the user.

Input Methods:

The exploration of input centered on the ultrasonic phased array. The authors considered how to configure this hardware to generate precise effects. This included systematically varying the control parameters of the array, such as the frequency, amplitude, and phase of the ultrasound waves from 64 transducers. By precisely controlling these parameters, they could manipulate the location and intensity of the focused acoustic energy on different target areas, including the lips, teeth, and tongue.
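The role of phase in steering the focal point can be illustrated with the textbook phased-array relationship: each transducer is driven with a phase offset that compensates for its distance to the focal point, so that all waves arrive there in phase. The sketch below is not the authors' implementation. The 64 transducers come from the text above, but the 8 x 8 grid layout, the 10.5 mm pitch, the 40 kHz carrier, the 343 m/s speed of sound, and all function names are assumptions made for illustration.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s in air at roughly room temperature (assumed)


def grid_positions(n=8, pitch_m=0.0105):
    """Positions of an n x n transducer grid centred on the origin (z = 0)."""
    offset = (n - 1) / 2
    return [
        ((ix - offset) * pitch_m, (iy - offset) * pitch_m, 0.0)
        for iy in range(n)
        for ix in range(n)
    ]


def focus_phases(positions, focal_point, frequency_hz=40_000.0):
    """Phase offset in radians, in [0, 2*pi), for each transducer."""
    wavenumber = 2 * math.pi * frequency_hz / SPEED_OF_SOUND
    phases = []
    for position in positions:
        distance = math.dist(position, focal_point)
        # Advance the phase by the propagation delay so every wave
        # reaches the focal point with the same phase.
        phases.append((-wavenumber * distance) % (2 * math.pi))
    return phases


if __name__ == "__main__":
    array = grid_positions()
    # Focal point 5 cm in front of the array centre (arbitrary example).
    phases = focus_phases(array, (0.0, 0.0, 0.05))
    print(len(phases), "phase values computed")
```

Moving the focal point only requires recomputing the phases, which is why a dynamic effect such as a swipe can be rendered by updating the focal position over time without any moving parts.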

Output Modalities:

The exploration of output focused on the range of tactile sensations that could be rendered. The authors identified and designed several haptic primitives, including simple point impulses (like a tap), continuous persistent vibrations, and dynamic swipes where the focal point moves across the skin. They then explored how these basic primitives could be animated over time and along 3D paths to create more complex and meaningful effects. This allowed them to simulate a wide variety of real-world sensations, such as the feeling of a spider crawling across the lips or the splash of a raindrop, providing a valuable guide for future designers of haptic experiences.

Studies for Better Understanding the New Capabilities

When introducing a novel interaction capability, its fundamental effects on human perception and experience are often unknown. Therefore, it is common for "be the first" papers to include studies to establish this new knowledge. These studies often include formative work that investigates basic perceptual characteristics (e.g., how well can people feel it?) or compares different design variations.

The Mouth Haptics paper exemplifies this approach. To understand the basic perceptual qualities of their technique, the authors conducted a perception study with 11 participants. This study investigated the sensitivity and spatial acuity of the lips, teeth, and tongue to the ultrasonic haptic stimuli, helping to determine the most effective modulation frequencies and amplitudes for creating sensations. This provided a baseline understanding for designing effective haptic effects.

Showcase of Usage and Applications

VR Scenarios: To demonstrate the technique's potential, the authors created three interactive VR scenarios used to showcase a wide range of applications and to gather user feedback. The scenarios included: a haunted forest, where users could feel spiderwebs brushing against their face or a spider crawling on their lips; a school simulator, which rendered haptics for everyday interactions like drinking from a fountain or brushing teeth; and a racing game, which simulated environmental effects like wind, rain, and puddle splashes, as well as the impact of collisions.

Immersive Experiences: Each scenario was designed to show how mouth haptics can enhance immersion by providing tactile feedback that complements the visual and auditory cues. By translating virtual events—from the subtle vibration of a cigarette to the startling splash of goo—into physical sensations on the mouth, the showcase makes the technique's value tangible and provides compelling use cases for creating richer, more believable virtual worlds.

User Study to Show its Advantage

Validation of Technique: A user study with 8 participants compared the VR experience with and without mouth haptics. The study evaluated the benefits of the proposed technique in terms of immersion, realism, and user preference.

Comparative Analysis: The user study included questionnaires that assessed the effectiveness of mouth haptics against traditional non-haptic VR experiences. It measured the impact on user experience dimensions such as autotelic, expressivity, immersion, realism, and harmony.

Results and Conclusion: The findings indicated that mouth haptics significantly enhanced the VR experience, with participants preferring the haptic condition over non-haptic scenarios. The study concluded that mouth haptics could be a valuable addition to consumer VR systems, offering a new dimension in sensory feedback.

The Mouth Haptics paper serves as an exemplary case study for the "be the first" research recipe, demonstrating how its components work together to build a comprehensive contribution for a new interaction technique. The paper does not merely introduce a novel capability in isolation. Instead, it systematically investigates the design space to map out the possibilities for future designers. This exploration is then complemented by perception studies, answering the HCI question of what users can actually feel. Building on this foundational understanding, the showcase of applications makes the technique's value tangible and compelling by demonstrating its use in rich, interactive scenarios. Finally, the user study provides the validation, offering empirical evidence that the new technique delivers on its promise by measurably improving the user experience. Each stage builds upon the others, from the initial concept and its technical scope to its perceptual grounding, practical potential, and validated benefits. This comprehensive approach provides a full picture of the new contribution, which is why these stages are common to "be the first" research.

Design Space Exploration for "Be the First" Interaction Techniques

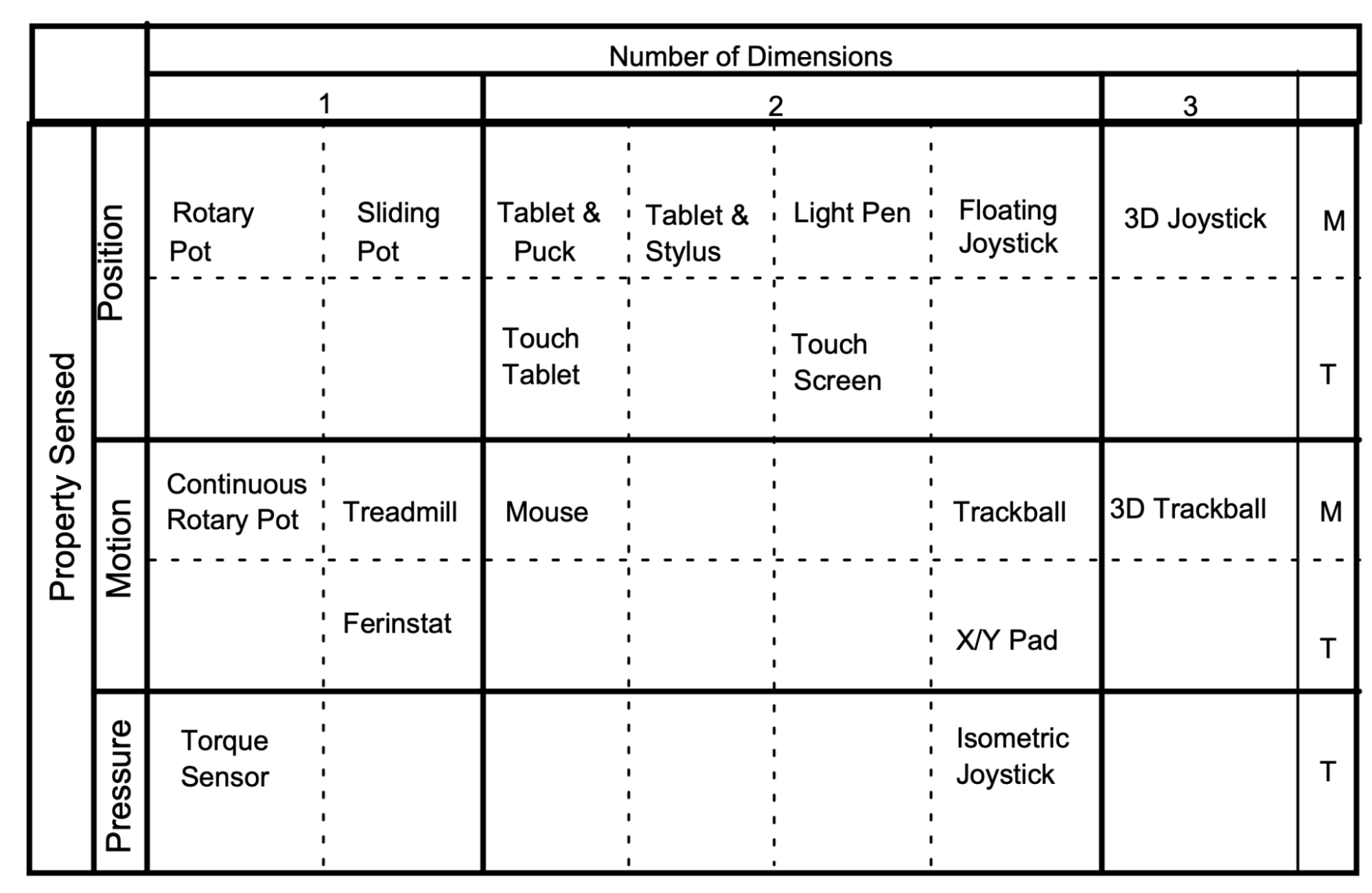

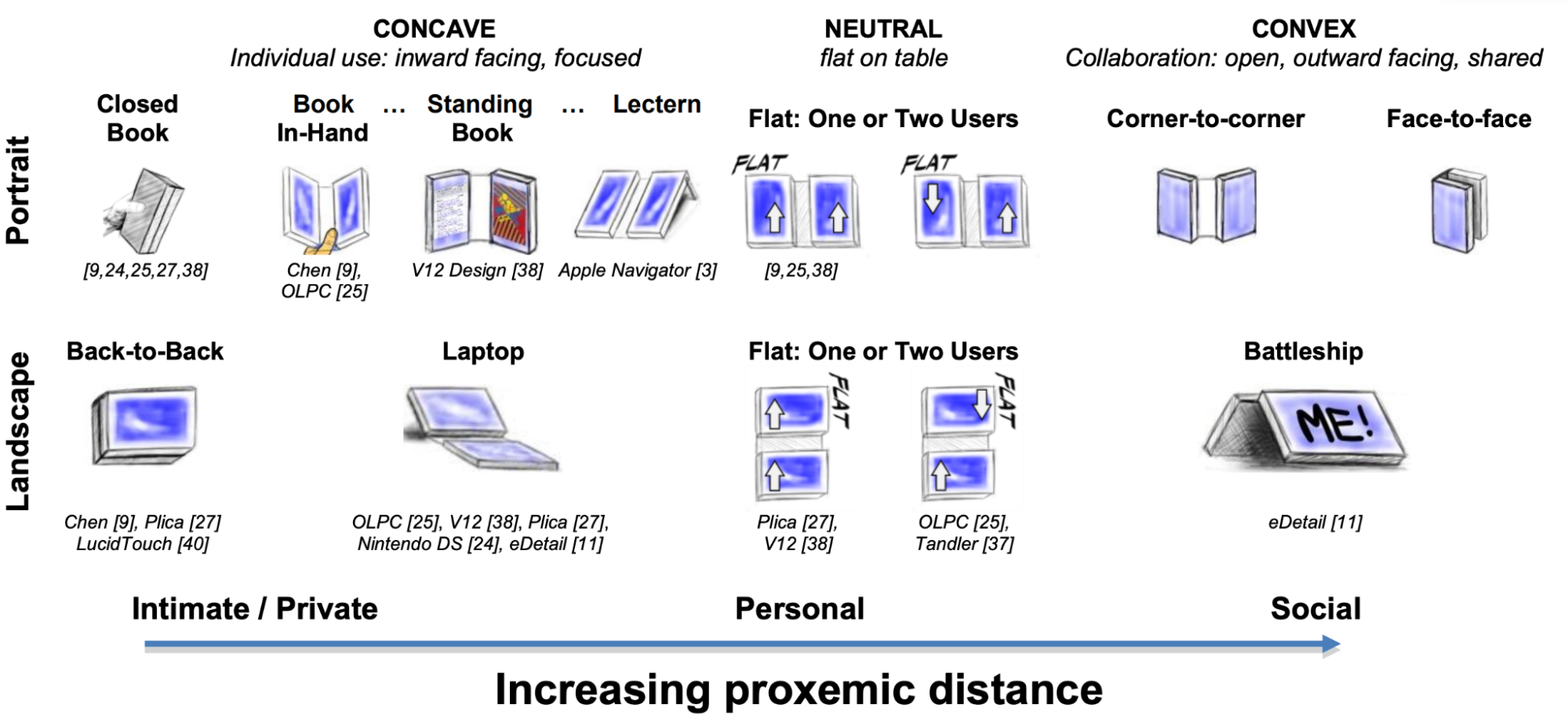

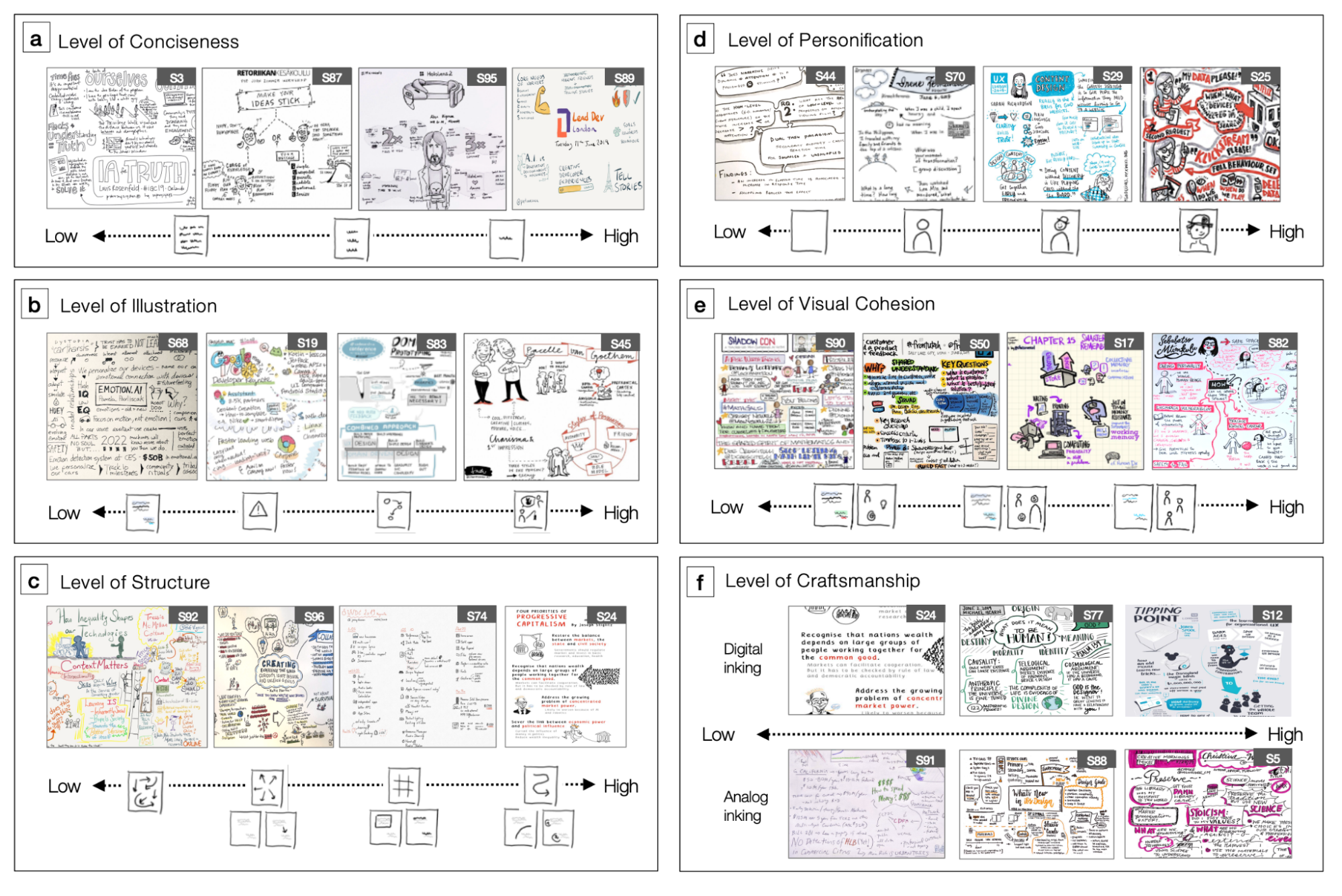

Design space exploration is a common and critically important component of "be the first" research projects. However, novice researchers often find this task challenging. A good design space exploration is characterized by well-defined and logical factors; it should be systematic and relevant without being overly complex. To help you create one, this section provides several examples and outlines concrete steps.

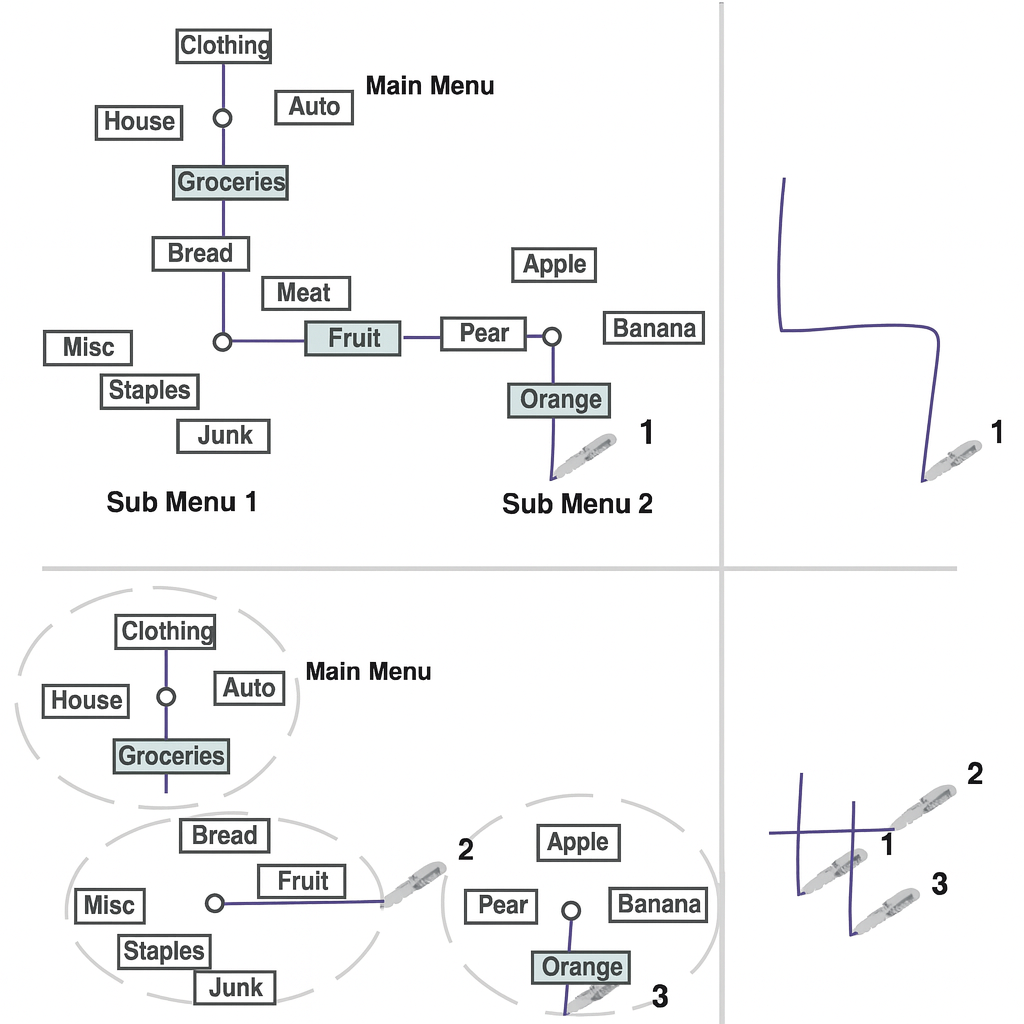

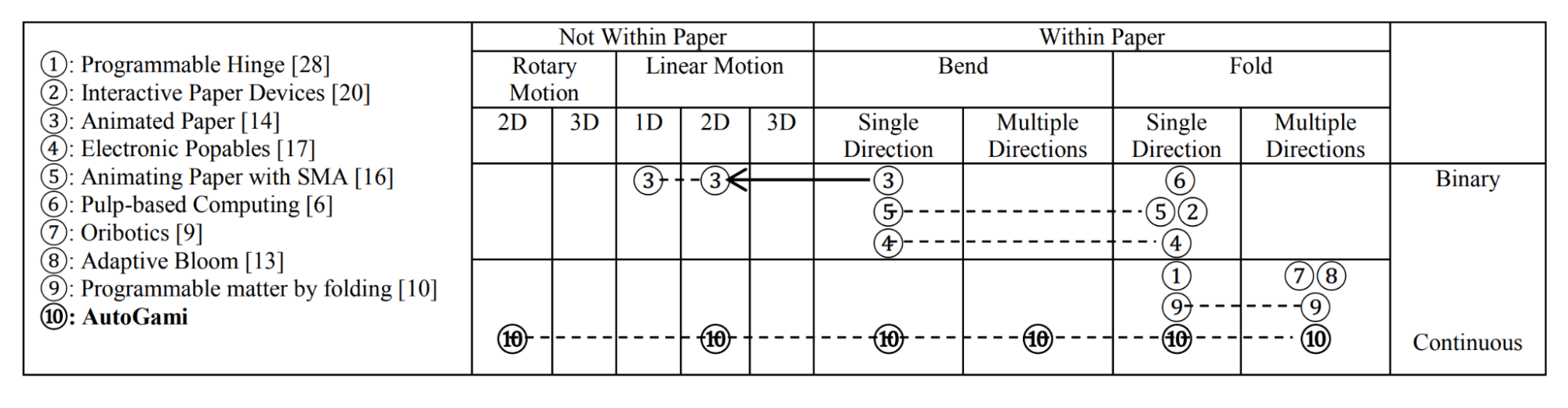

Figure 5.4: Top Left: Taxonomy of Input Devices (Buxton, 1983); Top Right: Design space of dual-screen postures, for individual and collaborative use, supported by the Codex (Hinckley et al., 2009). Middle: Levels of Sketchnote Design Space: Conciseness, Illustration, Structure, Personification, Cohesion, and Craftsmanship (Zheng et al., 2021). Bottom: Comparison of related works using the taxonomy of paper movement (Zhu and Zhao, 2013).

Here are steps to create effective design space mappings:

- Identify Core Dimensions or Factors: Start by determining the fundamental dimensions or factors that define your research space. These can be identified through logical reasoning about what aspects are most relevant to your problem, or by conducting a literature review to see how prior work has characterized the space. For example, in gestural interface research, core factors might include input modality (e.g., hand, finger, full body), sensing technology (e.g., camera, IMU, capacitive), and feedback type (e.g., visual, audio, haptic). Whether derived from first principles or from existing literature, these dimensions should capture the essential ways in which different approaches or solutions vary within your area of study.

- Structure the Space: Arrange your dimensions so that their conceptual relationships are clear and interconnected, forming a coherent bigger picture rather than a collection of unrelated factors. The goal is to show how each dimension relates to and interacts with the others, revealing the underlying structure of the design space. Use visual representations such as diagrams to illustrate how the dimensions combine, influence, or depend on each other. This approach ensures that the design space is not just a list of arbitrary axes, but a meaningful framework that explains how the parts fit together to define the possibilities in your research area.

- Validate and Refine: Share your design space with colleagues and domain experts. Their feedback can help identify missing dimensions, clarify ambiguous classifications, and strengthen the overall organization. Through this iterative refinement process, ensure the design space serves its intended purpose — whether that is to capture the current state of knowledge, suggest promising new research directions, or simply provide a useful lens for thinking about and systematically exploring relevant factors in your research area. The ultimate goal of this iterative design is to arrive at a systematic, insightful conceptual framework that can guide both your own research and that of others in the field.

Summary: Effective design space exploration involves (1) identifying the core dimensions or factors that define your research area, (2) structuring these dimensions to reveal their relationships and the overall landscape, and (3) validating and refining the space through expert feedback and iteration. This process is a critically important activity for "be the first" interaction technique research, as it not only clarifies the possibilities and boundaries of your new contribution, but also provides a foundation for future innovation and systematic inquiry.
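One lightweight way to work with a design space once its dimensions are identified is to write the factors and their levels down as data and enumerate the combinations. The sketch below uses the gestural-interface factors mentioned in the steps above; the dictionary layout and the function name `enumerate_design_space` are assumptions, and a real design space would usually exclude combinations that make no sense.

```python
from itertools import product

# Factors and levels taken from the gestural interface example above.
DESIGN_SPACE = {
    "input_modality": ["hand", "finger", "full body"],
    "sensing_technology": ["camera", "IMU", "capacitive"],
    "feedback_type": ["visual", "audio", "haptic"],
}


def enumerate_design_space(space):
    """Return every combination of levels as a list of dictionaries."""
    names = list(space)
    return [
        dict(zip(names, combination))
        for combination in product(*(space[name] for name in names))
    ]


if __name__ == "__main__":
    cells = enumerate_design_space(DESIGN_SPACE)
    print(len(cells), "candidate design points")  # 27 candidate design points
    print(cells[0])
```

Listing the cells explicitly makes it easy to mark which ones prior work already covers and which remain unexplored, which is one common use of a design space in a paper.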

The Ideal Workflow

While understanding the common components of a "be the first" paper is a great start, a frequent challenge for novice researchers is knowing how to produce them. What is the most effective process? Which step should come first? Without a clear roadmap, it is easy to misallocate effort—for example, by spending months perfecting a technical implementation before validating whether the core concept is even useful or interesting to users. This can lead to significant wasted time on ideas that are ultimately not viable.

To address this, we present an ideal workflow based on years of experience conducting this type of research (see Figure 5.5). This workflow is not just a sequence of steps; it is a strategic approach designed to de-risk research projects by front-loading low-cost validation activities. The core principle is to test the most critical assumptions as early and cheaply as possible, ensuring that significant time and resources are only invested in ideas that have demonstrated promise. The following section outlines this workflow, explaining each step and the rationale behind its placement in the sequence to help you avoid common pitfalls and maximize your research efficiency.

Figure 5.5: The "ideal" workflow of an interaction technique project (be the first)

Here are the key steps and their rationale:

- Propose Interaction Techniques Based on New Capabilities

- Starting point: Identify emerging technologies or capabilities that haven't been fully explored in an HCI context.

- Best practice: Pursue genuinely novel capabilities, either by creating new hardware yourself or by collaborating with other fields—like material science or engineering—to adapt their cutting-edge technologies for HCI.

- Literature Review

- Purpose: Understand the state of the art and identify research gaps. Be comprehensive in reviewing both academic literature and industry developments.

- Activities: Create mind maps, spreadsheets, and notes to document your understanding. See the literature review chapter for more details.

- Explore the Design Space

- Key activities:

- Identify key design factors for the new capability.

- Define the possible levels for each factor.

- Develop a preliminary taxonomy of potential interaction techniques. Keep this brief, as the core value of the capability has not yet been validated.

- Best practice: Systematically map out the design space to ensure relatively comprehensive coverage.

- Brainstorm Applications

- Goal: Brainstorming and piloting form a key, iterative cycle. The first part is to generate diverse usage scenarios (e.g., at least 5) to demonstrate the potential HCI value and versatility of your new capability. These scenarios are critical for convincing others that your investigation is valuable.

- Best practice: Explore broadly across different domains to find applications that show wider implications. This step feeds directly into pilot studies, which aim to identify a "winning case."

- Pilot Study

- Purpose: To quickly validate brainstormed scenarios and find at least one "winning case"—an application where the new capability offers clear benefits over existing methods.

- Method: Use low-cost techniques like Wizard of Oz (WoZ) studies to test concepts before full implementation.

- Best practice: Iterate rapidly between brainstorming and piloting. This cycle allows you to efficiently gather evidence of usefulness and identify the strongest application before investing significant time in platform development.

Key Checkpoint: The brainstorming and piloting cycle is a critical checkpoint. The subsequent steps (implementation and formal studies) represent the start of the formal investigation and require a tremendous amount of time and effort. Before moving forward, you must be confident that you have identified at least one "winning case" and can demonstrate a sufficient number of interesting applications. This ensures you are investing significant resources only after validating that your research direction has a strong, promising foundation.

- Implement a Demonstration Platform

- Purpose: Create a platform capable of conducting real experiments.

- Requirements: Must demonstrate multiple application scenarios.

- Best practice: Build something robust enough for formal evaluation but focused on the key innovation.

- Conduct Formal Study

- Purpose: Rigorously validate the usefulness of the new capabilities.

- Optional: Add studies to understand specific properties of the new capabilities.

- Examples: Study how humans perceive different levels of pressure or movement ranges.

- Best practice: Design experiments that isolate the impact of your innovation.

- Documentation and Demonstration

- Deliverables: Academic paper and video demonstration.

- Content: Showcase various application scenarios in the video.

- Best practice: Clearly communicate both the technical innovation and its practical impact.

This workflow is specifically designed to identify non-viable research directions as early as possible, before significant resources are invested. Each step builds on previous validation, ensuring that time and effort are only spent on promising directions with clear research contributions. The sequence moves from low-cost exploratory activities to more resource-intensive implementation and studies, maximizing research efficiency while minimizing the risk of pursuing dead ends.

A Note on Execution: The Art Behind the Science

While the workflow outlined above provides a structured path for "be the first" research, successfully navigating it is an art that requires experience. The steps offer a map, but the journey is filled with nuanced decisions that are difficult to codify.

For beginner researchers, it is crucial to follow this process in close consultation with an experienced advisor or mentor. An expert can help you recognize a "winning case" from a dead end, judge when a pilot study has yielded enough evidence, and avoid common pitfalls that can lead to significant wasted time and effort.

Often, the true value of this rigorous, front-loaded validation process is only fully appreciated in hindsight. Many researchers only realize the wisdom of systematically de-risking a project after completing one or more projects, some of which may have consumed months or years before a fatal flaw was discovered. By embracing this workflow early, you can build on a foundation of validated ideas and maximize your chances of impactful innovation.

5.3.2 "Be the Best" Research in Interactive Techniques

While the "be the first" strategy focuses on creating entirely new interaction capabilities, as demonstrated in the Mouth Haptics example above, the "be the best" strategy takes a different approach by improving upon existing interactive techniques that address known problems in HCI.

Figure 5.6: Recipe for Technique (be the best)

Common Components in "Be the Best" Interaction Technique Papers

Similar to the "be the first" recipe, this approach involves a systematic process of design and evaluation. However, the emphasis shifts from exploring a new frontier to outperforming established solutions for a known problem. This requires a different focus, particularly on rigorous comparison and clear evidence of improvement (see Figure 5.6). The key stages are:

- Identify a Well-Known Problem and Analyze State-of-the-Art Limitations: Instead of inventing a new capability, start by identifying a recognized and important problem in HCI. Conduct a thorough review to understand the existing state-of-the-art techniques that address this problem and, crucially, analyze their specific, measurable limitations. This forms the core motivation for your work.

- Design a Novel Solution with a Clear Hypothesis: Based on the limitations you identified, design a new interaction technique. Your design's novelty should be directly aimed at overcoming those specific drawbacks. This should be guided by a clear hypothesis: a testable claim about why and how your new approach will be superior to existing ones.

- Implement the Novel Technique and Key Comparators: Build an implementation of your proposed solution. To enable a fair comparison, you must also implement or obtain access to the most relevant state-of-the-art competitor(s). The quality of all implementations is critical for a valid evaluation.

- Conduct a Rigorous, Comparative User Study: This is the heart of a "be the best" paper. Design a controlled experiment that directly compares your technique against the established state-of-the-art. The study must be carefully designed to isolate the variable of interest, using well-defined tasks and metrics (e.g., speed, accuracy, error rate, user satisfaction) that allow for a direct, quantitative comparison. Unlike the more exploratory studies common in "be the first" research, the goal here is to produce definitive evidence of superiority.

- Provide a Comprehensive Explanation for the Improvement: It is not enough to simply show that your technique is better (e.g., "20% faster"). You must explain why. Analyze both your quantitative and qualitative data to build a compelling argument that links your design choices back to the observed performance or experience benefits. This analysis should validate your initial hypothesis and provide generalizable insights that can guide future designers.
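To make the comparative stage above more concrete, the following sketch summarizes a within-subjects comparison in which every participant completes the same task with both the baseline and the new technique. It reports the mean paired difference, a standardized effect size (Cohen's d_z), and a p-value from an exact sign-flip permutation test. The choice of test is an assumption made so the example needs only the Python standard library; the chapter does not prescribe a particular statistical test, and the completion times are invented for illustration.

```python
from itertools import product
from statistics import mean, stdev


def paired_summary(baseline, new):
    """Summarize a paired comparison of two techniques (lower = better)."""
    if len(baseline) != len(new):
        raise ValueError("each participant needs one score per technique")
    # Positive difference means the new technique was faster.
    diffs = [b - n for b, n in zip(baseline, new)]
    mean_diff = mean(diffs)
    # Cohen's d_z: mean paired difference over the SD of the differences.
    effect_size = mean_diff / stdev(diffs)
    # Exact sign-flip permutation test: under the null hypothesis, each
    # paired difference is equally likely to be positive or negative.
    observed = abs(sum(diffs))
    extreme = 0
    total = 0
    for signs in product((1, -1), repeat=len(diffs)):
        total += 1
        if abs(sum(s * d for s, d in zip(signs, diffs))) >= observed - 1e-12:
            extreme += 1
    return {
        "mean_diff": mean_diff,
        "effect_size": effect_size,
        "p_value": extreme / total,
    }


# Hypothetical task completion times in seconds, one pair per participant.
baseline_times = [2.10, 1.95, 2.40, 2.05, 2.30, 1.88, 2.22, 2.15]
new_times = [1.80, 1.90, 2.00, 1.85, 2.05, 1.80, 1.95, 2.00]

result = paired_summary(baseline_times, new_times)
print(result)
```

The enumeration over all sign assignments grows as 2^n, so this exact form is only practical for small samples; for larger studies a statistics package would normally be used. Whatever test is chosen, the numbers only show that a difference exists. Explaining why it exists still requires the qualitative analysis described in the last stage.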

A case study illustrating the "be the best" strategy: "EmoBalloon - Conveying Emotional Arousal in Text Chats with Speech Balloons" (CHI 2022)

EmoBalloon is a system designed to enrich text-based chat by visually conveying the sender's emotional arousal ( Aoki et al., 2022 ). Instead of relying on static emojis, it analyzes the sender's voice input to dynamically generate expressive speech balloons that change in shape and animation to reflect the emotional tone. This project serves as an example of the "be the best" strategy, as illustrated below.

1. Identify a Well-Known Problem and Analyze State-of-the-Art Limitations: Text-based chat applications are integral to daily communication but suffer from a well-known problem: the inability to convey emotional tone effectively. This can lead to misunderstandings in social and professional contexts. The current state-of-the-art solutions involve using emoticons, stamps, emotional typefaces, and animated text to enhance emotional information. However, these solutions have clear limitations: they are static, require manual selection that disrupts conversation flow, and their interpretation can be subjective and culturally dependent.

2. Design a Novel Solution with a Clear Hypothesis: To overcome these limitations, the EmoBalloon project investigated how emotionality is conveyed visually in Japanese manga. By analyzing the relationship between speech balloon shapes and emotional content in text, the researchers identified a visual language for expressing emotional arousal. Based on these findings, they designed a novel technique to automatically generate expressive speech balloons. The design was guided by a clear hypothesis: that speech balloons whose shapes correspond to emotional arousal would convey the sender's emotional state more accurately than manually selected, static emojis.

3. Implement the Novel Technique and Key Comparators: The EmoBalloon system was implemented as a functional voice input text chat application. The core of the system was a model trained on data extracted from the Manga109 dataset. This model, an Auxiliary Classifier Generative Adversarial Network (ACGAN), could automatically generate speech balloons with shapes that visually represented a specified level of emotional arousal. The system generated a balloon shape based on the detected speech volume, corresponding to the level of arousal. For comparison, the key state-of-the-art competitors were also implemented: a traditional text chat interface allowing users to select from a standard set of emoticons and a manual selection version of EmoBalloon where users could choose a speech balloon using a slider.

4. Conduct a Rigorous, Comparative User Study: A controlled experiment was designed to directly compare EmoBalloon against the traditional emoticon interface and the manual selection EmoBalloon interface. In the study, Japanese participants engaged in scripted role-play scenarios, acting as both senders and receivers. After each message was sent, both participants rated the emotional arousal and valence of the message using the Self-Assessment Manikin (SAM) test. This allowed the researchers to measure the difference in perceived emotional arousal between the different interfaces.

5. Provide a Comprehensive Explanation for the Improvement: The results of the user study demonstrated that EmoBalloon was significantly better at conveying emotional arousal. It measurably reduced the discrepancy between the sender's intended arousal and the receiver's perception of it, confirming the initial hypothesis. The analysis explained why this improvement occurred: the system leveraged a visual language of balloon shapes, derived from Japanese manga, that provided a more nuanced and continuous representation of emotional arousal than discrete, manually selected emoticons. Furthermore, EmoBalloon specifically communicated arousal without altering the emotional valence of the text, unlike emoticons, which can ambiguously affect both. This provided a clear, evidence-based explanation for the technique's superiority.

EmoBalloon demonstrates a "be the best" approach in HCI by addressing a well-known problem with a novel solution that is both technically innovative and user-centered. The carefully designed user study provides compelling evidence that the proposed technique enhances emotional communication in text chats, offering a more immersive and accurate reflection of the sender's emotional state.

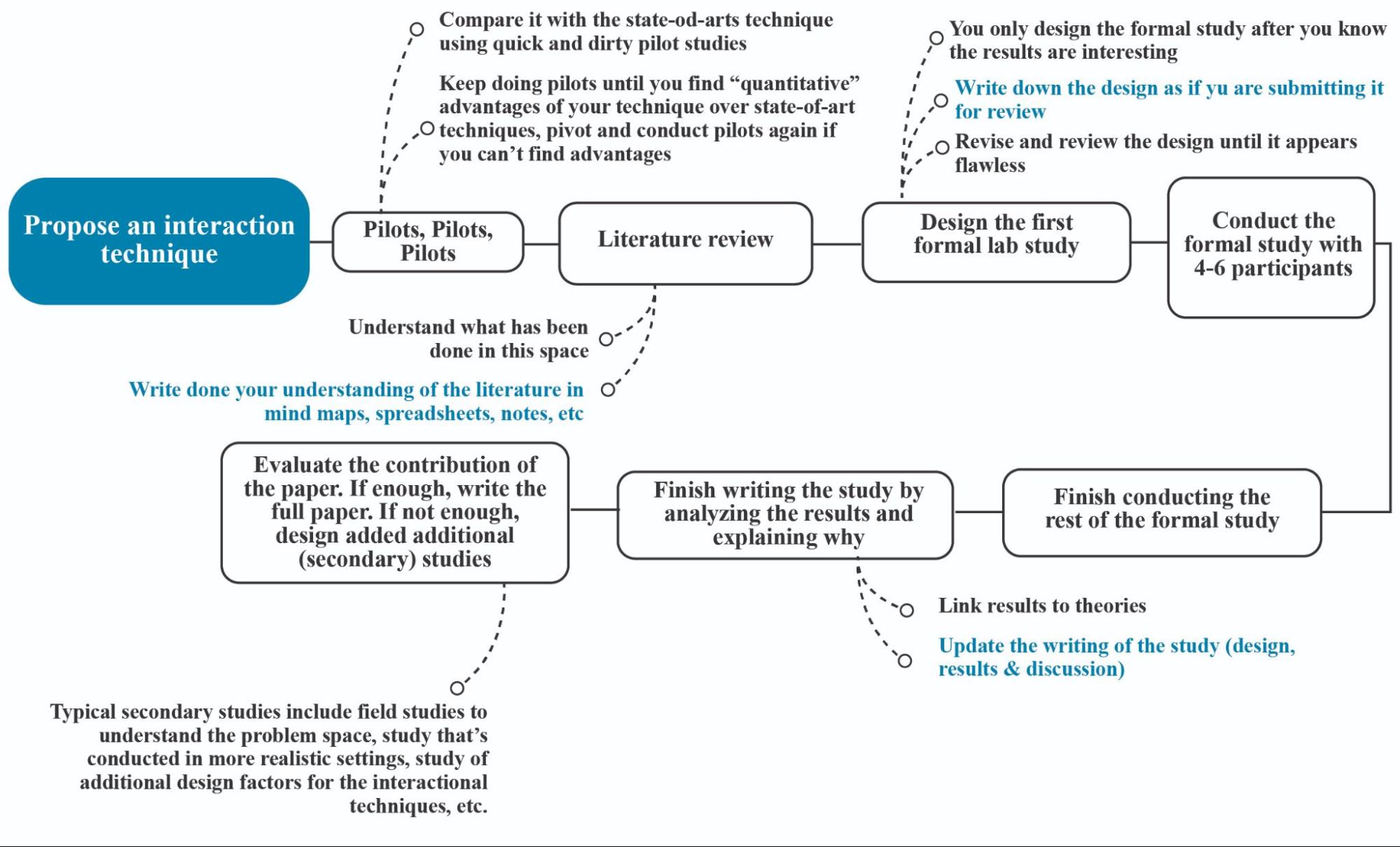

The Ideal Workflow

Figure 5.7: The "ideal" workflow of an interaction technique project (be the best)

Figure 5.7 summarizes the ideal workflow for "be the best" interaction technique research in HCI. Each step is explained in detail below:

1. Propose an Interaction Technique

- Starting point: This typically starts with a hunch. For example, you might notice that a state-of-the-art interaction technique is suboptimal and have a reason to believe it can be improved. This insight triggers your investigation to design a better alternative.

2. Pilots, Pilots, Pilots

- Purpose: To determine if your new design shows promise for outperforming the current state-of-the-art.

- Key activities:

- Conduct rapid, informal pilot studies comparing your technique against state-of-the-art competitors.

- Iterate on your design, continuing to pilot until you find consistent, quantitative advantages.

- Be prepared to pivot or modify your approach if advantages do not emerge.

- Note: It is crucial to remain objective and know when to stop. Sometimes, the state-of-the-art is simply hard to beat. Avoid becoming overly attached to an idea that isn't showing promise after several iterations. If there is still no clear advantage, be ready to abandon the approach and change direction. This prevents wasting valuable time and energy on a fruitless endeavor.

Key Milestone: The Go/No-Go Decision. You must have consistent, quantitative evidence from pilot studies demonstrating that your technique outperforms the state-of-the-art. This evidence provides the strong justification required to proceed with a formal, rigorous investigation. Reaching this milestone marks the transition from informal piloting to the formal scientific process detailed in the following steps.

3. Literature Review

- Purpose: Thoroughly understand the research landscape.

- Activities:

- Document your understanding through mind maps, spreadsheets, and notes.

- Identify what has already been done in this space.

- Best practice: Ensure comprehensive knowledge of related work to position your contribution appropriately.

4. Design the First Formal Lab Study

- Prerequisites: Only proceed when you know your technique has quantitative advantages.

- Process:

- Write the design as if submitting for peer review.

- Review and revise until the design appears flawless.

- Best practice: Craft a methodologically sound study that will withstand scientific scrutiny.

5. Conduct the Formal Study

- Scope: Start with a small initial group, typically 4-6 participants.

- Documentation: Write down the study results as if preparing for submission.

- Review process: Refine the writing and revise if needed until everything is clear.

- Best practice: Ensure proper experimental controls and data collection. This is similar to what you have learned in empirical research.

6. Finish Conducting the Rest of the Formal Study

- Scale: Expand to the full participant pool (typically 12-24 participants).

- Best practice: Maintain consistent protocols across all participants.

7. Finish Writing the Study

- Key components:

- Analyze the results thoroughly.

- Explain why the outcomes occurred.

- Link results to theories.

- Update all sections (design, results, discussion).

- Best practice: Ensure robust statistical analysis and theoretical grounding.

- Note: It is not enough to show that your technique is better. A true research contribution must also explain why it performs better, as this is the source of new knowledge. To build this explanation, connect your results to established theories and investigate the underlying causes by interviewing users, trying the technique yourself, and performing deeper analysis. Your contribution is only solidified once you can convincingly articulate the reasons behind your results.

8. Evaluate the Contribution

- Decision point: Assess if the findings constitute a sufficient research contribution. A paper is judged on its overall contribution, and a single study may not generate enough new knowledge to stand alone.

- Options:

- If sufficient: Write the full paper.

- If insufficient: Design secondary studies to generate additional knowledge and strengthen the contribution.

- Secondary studies may include:

- Field studies to evaluate the technique in realistic settings.

- Studies exploring additional design factors or parameters of the technique.

- Longitudinal studies to assess learnability over time.

- Best practice: Be honest about the limitations of your work. Addressing them with further studies builds a more robust and compelling paper.

The Challenge and Reward of "Be the Best"

It is worth noting that the "Be the Best" path has become increasingly challenging over time. After decades of HCI research, state-of-the-art interaction techniques for many common tasks have reached a very high level of performance, making it difficult to create a new technique that is demonstrably superior. This reality makes this research route harder to pursue successfully.

However, this difficulty should not be a deterrent. With a curious mind and a keen eye for subtle inefficiencies or new technological affordances, researchers can still identify opportunities for meaningful improvement. And when a winner is found, writing a "Be the Best" paper is arguably one of the most straightforward routes to a high-impact publication. The narrative is clear and powerful: a head-to-head comparison demonstrating quantitatively that your technique outperforms the best-known alternative.
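Returning to the go/no-go decision in step 2 of the workflow above, one way to keep the judgment objective is to fix the criteria before looking at the pilot data. The sketch below treats "consistent, quantitative advantages" as a minimum relative improvement and a minimum per-participant win rate that must both hold across the most recent pilot rounds. The function name, the thresholds, and the pilot data are all hypothetical assumptions chosen for illustration; appropriate values depend on the task and should be discussed with an experienced advisor.

```python
from statistics import mean


def go_no_go(pilot_rounds, required_rounds=2,
             min_improvement=0.10, min_win_rate=0.75):
    """Return True if the latest pilot rounds show a consistent advantage.

    pilot_rounds: list of rounds in chronological order; each round is a
    list of (baseline_time, new_time) pairs, one per pilot participant.
    All thresholds are illustrative assumptions, not established norms.
    """
    if len(pilot_rounds) < required_rounds:
        return False  # not enough evidence yet
    for pairs in pilot_rounds[-required_rounds:]:
        baseline_mean = mean(b for b, _ in pairs)
        new_mean = mean(n for _, n in pairs)
        improvement = 1 - new_mean / baseline_mean  # relative speed-up
        win_rate = sum(n < b for b, n in pairs) / len(pairs)
        if improvement < min_improvement or win_rate < min_win_rate:
            return False
    return True


# Hypothetical pilot data (task times in seconds) across design iterations.
rounds = [
    [(2.0, 2.1), (1.9, 1.8), (2.2, 2.3)],              # early design
    [(2.0, 1.7), (1.9, 1.6), (2.2, 1.9), (2.1, 1.8)],  # revised design
    [(2.0, 1.6), (2.1, 1.8), (1.8, 1.7), (2.3, 1.9)],  # revised design again
]

print("Proceed to formal study:", go_no_go(rounds))
```

Only the most recent rounds are checked because earlier rounds typically reflect designs that have since been revised. If the check keeps failing after several iterations, that is the signal to pivot or abandon the idea, as the note in step 2 advises.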

Unifying Principles: "First" vs. "Best"

While "Be the First" and "Be the Best" represent distinct contribution types, they are not mutually exclusive and share fundamental research principles. Understanding their relationship is key to producing high-impact work. A common question is how these two approaches differ and what underlying principles they share.

- Core Challenge Distinction:

- "Be the First": The primary challenge is demonstrating novelty and potential. This research introduces a new interaction paradigm, input modality, or technological capability. The evaluation focuses on proving that the new concept is viable, opens up new possibilities, and is worthy of further exploration. The comparison is often against a baseline of "not being possible before" or a fundamentally different approach.

- "Be the Best": The primary challenge is demonstrating superior performance. This research takes an existing, understood problem and provides a solution that is measurably better (e.g., faster, more accurate, less error-prone, or preferred by users) than the current state-of-the-art. The evaluation requires a direct, rigorous, and quantitative comparison against the strongest existing competitors.

- Shared Foundations of Rigor: Despite their different goals, both approaches are built on the same foundation of scientific rigor. This is the most critical common aspect.

- Deep Literature Review: You cannot claim to be "first" without knowing what has come before, nor can you claim to be "best" without knowing the current benchmark. A comprehensive understanding of prior work is non-negotiable.

- Quantitative Evidence: Both contribution types move beyond subjective claims. Whether proving the potential of a new idea or the superiority of an existing one, the argument must be supported by quantitative data gathered through carefully designed studies. The "Go/No-Go" milestone, based on pilot data, is critical for both.

- Methodological Soundness: The value of the contribution rests on the quality of the evaluation. Both require well-designed experiments, clear hypotheses, controlled variables, appropriate statistical analysis, and transparent reporting.

In essence, "Be the First" research creates a new race, while "Be the Best" research aims to win an existing one. Both require the same discipline, training, and rigorous execution to successfully convince the research community of their value.

5.4 Conclusion: The Value of Interaction Technique Research

Research into novel interaction techniques represents a vital contribution to the field of HCI. Interaction techniques are standardized sequences of actions that users perform to accomplish specific interaction goals. These techniques form the fundamental building blocks of human-computer interaction, providing consistent ways for users to manipulate and control interfaces. Common examples include:

- Pointing and Selection : Using a cursor or touch input to indicate and activate interface elements

- Menu Navigation : Traversing hierarchical menu structures to access commands and options

- Panning and Scrolling : Moving through content that extends beyond the visible viewport

- Zooming : Adjusting the scale and detail level of displayed information

- Dragging and Dropping : Moving objects by selecting, dragging, and releasing them in new locations

- Text Entry : Inputting text through keyboards or other text input methods

Due to their focused scope and relatively simple nature compared to complete systems, interaction techniques enable and demand thorough investigation. The core value proposition lies in developing new, more effective ways to perform common interactions that can be widely applied. This focused scope means researchers must comprehensively validate the technique's benefits through rigorous studies that examine all relevant factors and contexts.

There are two main paths to making valuable contributions in this space:

- Be the First Contributions - These emerge from exploring entirely new interaction capabilities or contexts, such as:

- Investigating novel input dimensions like pressure, hover, force, or tilt

- Designing techniques for emerging platforms like VR/AR

- Enabling previously impossible interactions through new technology

- Be the Best Contributions - These focus on measurably improving existing interaction paradigms through careful refinement and validation against state-of-the-art alternatives.

The need for interaction technique research continues to grow as new technologies, platforms and use cases emerge. Each new context brings unique constraints and opportunities that demand novel interaction solutions. However, it's important to note that interaction techniques are just one component of constructive design research. When tackling more complex creative tasks like sketching, animation, modeling or writing, a more comprehensive systems-level approach is often required. The research strategies and workflow discussed here need to be adapted accordingly for such broader system design challenges - a topic we will explore in depth in the next chapter on systems and toolkits.

Through careful study of interaction techniques, HCI researchers can develop fundamental building blocks that enhance human-computer interaction across many applications. This makes interaction technique research an enduring and valuable contribution to the field.

Having explored the design and evaluation of individual interaction techniques, we now turn our attention to a different scale of constructive research. The following chapter will discuss the contribution type of "Systems and Toolkits," where the focus shifts from single techniques to integrated systems and enabling platforms that support novel forms of HCI.

References

Grossman, T., & Balakrishnan, R. (2005, April). The bubble cursor: enhancing target acquisition by dynamic resizing of the cursor's activation area. In Proceedings of the SIGCHI conference on Human factors in computing systems (pp. 281-290).

Zhao, S., Dragicevic, P., Chignell, M., Balakrishnan, R., & Baudisch, P. (2007, April). Earpod: eyes-free menu selection using touch input and reactive audio feedback. In Proceedings of the SIGCHI conference on Human factors in computing systems (pp. 1395-1404).

Chen, L., Wang, H., Charles, Z., & Papailiopoulos, D. (2018, July). Draco: Byzantine-resilient distributed training via redundant gradients. In International Conference on Machine Learning (pp. 903-912). PMLR.

Bako, H. K., Varma, A., Faboro, A., Haider, M., Nerrise, F., Kenah, B., ... & Battle, L. (2023, March). User-driven support for visualization prototyping in D3. In Proceedings of the 28th International Conference on Intelligent User Interfaces (pp. 958-972).

Ramos, G., Boulos, M., & Balakrishnan, R. (2004, April). Pressure widgets. In Proceedings of the SIGCHI conference on Human factors in computing systems (pp. 487-494).

Apitz, G., & Guimbretière, F. (2004, October). CrossY: a crossing-based drawing application. In Proceedings of the 17th annual ACM symposium on User interface software and technology (pp. 3-12).

Zhao, S., & Balakrishnan, R. (2004, October). Simple vs. compound mark hierarchical marking menus. In Proceedings of the 17th annual ACM symposium on User interface software and technology (pp. 33-42).

Ram, A., Xiao, H., Zhao, S., & Fu, C. W. (2023). VidAdapter: Adapting Blackboard-Style Videos for Ubiquitous Viewing. Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies, 7(3), 1-19.

Grossman, T., Hinckley, K., Baudisch, P., Agrawala, M., & Balakrishnan, R. (2006, April). Hover widgets: using the tracking state to extend the capabilities of pen-operated devices. In Proceedings of the SIGCHI conference on Human Factors in computing systems (pp. 861-870).

Weiss, M., Wagner, J., Jansen, Y., Jennings, R., Khoshabeh, R., Hollan, J. D., & Borchers, J. (2009, April). SLAP widgets: bridging the gap between virtual and physical controls on tabletops. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems (pp. 481-490).

Shen, V., Shultz, C., & Harrison, C. (2022, April). Mouth haptics in VR using a headset ultrasound phased array. In Proceedings of the 2022 CHI Conference on Human Factors in Computing Systems (pp. 1-14).

Hinckley, K., Dixon, M., Sarin, R., Guimbretiere, F., & Balakrishnan, R. (2009, April). Codex: a dual screen tablet computer. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems (pp. 1933-1942).

Zheng, R., Fernández Camporro, M., Romat, H., Henry Riche, N., Bach, B., Chevalier, F., ... & Marquardt, N. (2021, May). Sketchnote components, design space dimensions, and strategies for effective visual note taking. In Proceedings of the 2021 CHI Conference on Human Factors in Computing Systems (pp. 1-15).