Chapter 6: Systems and Toolkits, Evaluation Methods, and Overall Summary

In the previous chapter, we explored the foundations of constructive research and introduced the two research approaches. Building on that foundation, this chapter turns to interactive systems and toolkits, which represent the other major category of constructive research in HCI. Unlike interaction techniques, which focus on optimizing specific, well-defined user actions, systems and toolkits are broader in scope and ambition.

6.1 Interactive Systems

6.1.1 Brief Overview of Interactive Systems

Interactive systems, within the context of Human-Computer Interaction (HCI), are computer systems designed to help users accomplish specific, often complex, tasks by facilitating interaction between humans and technology. While a strict definition is elusive, interactive systems can generally be distinguished from interaction techniques using the following heuristics:

Interactive systems tend to:

- Address user goals at the application level.

- Combine multiple components into a coherent experience.

- Handle complex workflows from start to finish.

- Have identifiable use cases and user journeys.

Interaction techniques tend to:

- Solve specific sub-problems within larger systems.

- Function as components or building blocks.

- Focus on one particular aspect of interaction.

- Be reusable across different systems.

However, the distinction is not always clear-cut. A sophisticated technique can evolve into a user-facing application, while a modular system might be embedded as a component within a larger one. Ultimately, context determines whether an artifact is viewed as a system or a technique; what is a complete system to one user may be just a component in another's workflow. Examples of interactive systems include SandCanvas (Kazi et al., 2011), Draco (Kazi et al., 2014), VidAdapter (Ram et al., 2023), Prefab (Dixon & Fogarty, 2010), BumpTop (Agarawala & Balakrishnan, 2006), and CrossY (Apitz & Guimbretière, 2004).

Systems are much more complex and support a wide range of functionalities, which makes them difficult to describe and evaluate comprehensively.

It is well known and openly discussed in the HCI community that system research is often regarded as more difficult to publish (Nebeling, 2017). One reason is that building a system typically requires a much greater investment of time and effort: often at least a year of development, compared to empirical research projects, which experienced PhD students can often complete in about six months. Recent advances in large language models and AI-assisted coding may reduce some of the development burden, but the overall complexity remains high.

Moreover, systems are inherently more challenging to evaluate: their breadth and the sheer number of features make it difficult to design comprehensive studies that convincingly demonstrate the value of the individual features. Unlike interaction techniques, where the novelty and contribution can often be pinpointed and empirically validated through focused experiments, the innovations in a system may be distributed across many components, making it harder for reviewers to recognize and appreciate the core contributions. As a result, the novelty of such work is not always as immediately apparent, and the evaluation is less straightforward, which can make acceptance at top venues more difficult. These factors contribute to the perception — and reality — that publishing system work is a more arduous and uncertain process in HCI research.

Nevertheless, systems often address concrete, real-world problems and provide significant practical value. They also serve as a strong demonstration of a researcher’s broad skill set, integrating design, engineering, and problem-solving. This makes systems not only important for technological advancement, but also for showcasing the depth and breadth of innovation and real-world application.

6.1.2 Research Approaches of Interactive Systems

While the "be the best" and "be the first" distinction is quite useful for categorizing interaction technique research, it is less helpful when applied to system papers. This is largely because systems are inherently more complex to develop and are typically designed to address a particular, often multifaceted, real-world task. As a result, most system papers tend to share a similar structure: they identify a complex problem, analyze the limitations of existing solutions, and present a new system that addresses these challenges.

Although there are a few visionary system papers (such as CrossY ( Apitz & Guimbretière, 2004 ) or BumpTop ( Agarawala & Balakrishnan, 2006 )) that could be considered "be the first" by introducing fundamentally new ways of interacting, these are relatively rare. The vast majority of system research falls into the "be the best" category, focusing on improving how a specific task is accomplished. Moreover, because systems often integrate many features and components, the boundaries between "be the best" and "be the first" become blurred — innovations may be distributed across the system rather than concentrated in a single novel idea.

Attempting to strictly categorize system papers as "be the best" or "be the first" is therefore not particularly useful for several reasons: (1) the distribution is highly unbalanced, with most system papers falling into the "be the best" category and only a few qualifying as "be the first"; (2) the complexity of system development means that even within the same category, the way components are assembled and the structure of the paper can vary greatly depending on the specific context and goals. For these reasons, the "be the best"/"be the first" framework is less informative for system research than it is for interaction techniques.

When discussing the research approach for system papers, it is useful to first outline the typical components that such papers include. While there is a general structure that most system papers follow, the way these elements are assembled and emphasized often depends on the specific goals and context of the research. In the following, we summarize these common components and then provide examples to illustrate how different papers adapt and prioritize them to best present their contributions.

6.1.3 System Paper Common Components and Examples

Most system papers are motivated by dissatisfaction with how certain tasks are currently performed. This dissatisfaction can stem from several sources: the existing process may be overly manual and tedious (as in the case of sand animation, where traditional methods require labor-intensive work and researchers are inspired to create digital systems to streamline the process); the available tools may be general-purpose but not well-suited for the specific task (for example, while software like Adobe Premiere can adapt videos, it is not optimized for specialized video adaptation tasks, motivating systems like VidAdapter); or new paradigms may be introduced that fundamentally change how a task is approached (such as enabling direct manipulation of objects within a video for editing, rather than relying on traditional timeline-based interfaces).

A well-crafted system paper typically weaves together several key elements:

- A clear articulation of the task or vision at the heart of the work.

- A thoughtful analysis or study that reveals the limitations or challenges present in existing tools or approaches.

- A set of design goals, factors, or features derived from that analysis or study.

- A detailed description and demonstration of the final front-end design (including interface and interaction considerations) and the system’s underlying architecture and technical implementation, showing how the core components and backend mechanisms address the identified challenges.

- When appropriate, a presentation of the iterative design process that led to the final system, including key prototypes, design alternatives, and formative evaluations. This should highlight what worked and what did not, demonstrating how user feedback and iterative testing informed and improved the design over time.

- Compelling evidence and validation — often through practical usage scenarios, user studies, or real-world deployments — demonstrating how the new system enables tasks that are improved, more efficient, or previously impossible.

- Insights or generalizable lessons that deepen our understanding and can inform the design of future interactive systems, contributing to the broader HCI community through empirical findings, theoretical analysis, or logical argument.

Given the complex nature of system research, it is often challenging to comprehensively address every aspect within a single paper. Researchers must therefore strategically balance which elements to include: the components above are common to many system papers, but their specific composition, emphasis, and presentation strategy vary with the project’s characteristics and scope.

We now present three examples, demonstrating the varying composition strategies commonly employed in system research papers.

Comparing System Paper Structures: Three Examples

System papers in HCI often share a set of core components, but the way these components are arranged and emphasized can vary depending on the nature of the project. To illustrate this, the table below compares three representative system papers (Draco, Eyeditor, and PilotAR), highlighting which components are common across all and which are distinct or emphasized differently. This side-by-side comparison makes it easier to see how paper structure adapts to the characteristics and contributions of each system.

| Component / Section | Draco ("Cool Demo") | Eyeditor (Empirical Study) | PilotAR (Iterative Design) |
| --- | --- | --- | --- |
| Task or Vision | ✔️ | ✔️ | ✔️ |
| Limitations of Existing Tools | (Some, less focus) | ✔️ | ✔️ |
| Formative Research | ✔️ | ✔️ | ✔️ |
| Design Goals/Features | ✔️ | ✔️ | ✔️ |
| Front-end Design & Architecture | ✔️ | ✔️ | ✔️ |
| Justification for Design Choices | (Demo as evidence) | ✔️ (Controlled study) | (Via iteration/feedback) |
| Iterative Design Process | ✖️ | (Not emphasized) | ✔️ (Dedicated section) |
| Empirical Study/Validation | (Demo, user feedback) | ✔️ (Controlled experiment) | ✔️ (Case studies, SUS) |
| Insights/Generalizable Lessons | (Some, less focus) | ✔️ | ✔️ |
| Presentation Strategy | Cool demo | Study-justified design | Design iteration |

Legend:

✔️ = Present and emphasized

✖️ = Not present

( ) = Present but less emphasized or in a different form

Discussion: How Structure Varies by Project

- Draco has a visually compelling demo, which helps communicate its novelty and value ( Kazi et al., 2014 ). In cases like this, the paper’s structure often becomes more streamlined: it motivates the work, describes the design and implementation, and then relies on the demonstration and user feedback as primary evidence. There is typically less emphasis on controlled studies or detailed iterative design documentation, since the demo itself can help justify the contribution.

- Eyeditor takes a different approach ( Ghosh et al., 2020 ). When the system’s value is not as immediately apparent through visual demonstration, authors choose to strengthen the paper by including a controlled experiment to justify key design decisions (such as the optimal text presentation mode). The structure still covers all the standard components, but adds a dedicated empirical study section to provide more rigorous evidence for the system’s advantages and design choices.

- PilotAR illustrates a pattern that can be useful for more complex systems with many interdependent features ( Janaka et al., 2024 ). Rather than isolating and empirically testing each design element, the paper devotes a section to the iterative design process, documenting how feedback and repeated refinement shaped the final system. This approach offers deeper insight into the design space and demonstrates rigor by being transparent about the system’s evolution.
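The comparison table lists SUS among PilotAR's validation measures. Assuming this refers to the standard ten-item System Usability Scale, its scoring rule can be sketched as follows. The function name and the sample responses are illustrative only and are not taken from any of the papers discussed.

```python
from typing import Sequence


def sus_score(responses: Sequence[int]) -> float:
    """Return a System Usability Scale score (0-100) for one participant.

    `responses` holds the ten item ratings in questionnaire order,
    each on a 1-5 scale (1 = strongly disagree, 5 = strongly agree).
    """
    if len(responses) != 10:
        raise ValueError("SUS requires exactly 10 item responses")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("each response must be between 1 and 5")

    total = 0
    for index, response in enumerate(responses):
        if index % 2 == 0:
            # Items 1, 3, 5, 7, 9 are positively worded: contribute (rating - 1).
            total += response - 1
        else:
            # Items 2, 4, 6, 8, 10 are negatively worded: contribute (5 - rating).
            total += 5 - response
    # The summed contributions (0-40) are scaled to 0-100.
    return total * 2.5


# Hypothetical responses from a single participant.
example = [4, 2, 5, 1, 4, 2, 4, 2, 5, 1]
print(sus_score(example))  # 85.0
```

A single aggregate score like this summarizes perceived usability but says little about individual features, which is one reason system papers usually pair it with case studies or qualitative feedback.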

Special "Visionary" System Papers

While we have examined three "be the best" type system papers above, here we explore two compelling examples of visionary "be the first" system papers: BumpTop ( Agarawala & Balakrishnan, 2006 ) and CrossY ( Apitz & Guimbretière, 2004 ). Both papers exemplify novel system-level approaches that fundamentally reimagined interaction paradigms. BumpTop introduced a revolutionary desktop interface using a 2.5D environment with piles as the organizing metaphor and pen-based interaction. Similarly, CrossY explored how a drawing application could be rebuilt around goal crossing as the primary interaction technique instead of traditional pointing.

A distinguishing feature of both papers is their compelling demonstration videos that effectively showcase practical implementations of their novel concepts. These demonstrations serve as powerful evidence, offering clear and engaging visualizations of their theoretical frameworks in action.

Given that both papers introduce multiple innovative concepts, their structures prioritize explaining these novel elements and demonstrating how they function within an integrated system. This approach helps readers understand not just individual components, but how they work together to create a cohesive user experience.

The papers share similar structural elements and page counts:

Design Goals

- CrossY : Dedicates ~1 page to "MOTIVATION AND DESIGN GOALS," outlining key aspects like expressiveness, fluid command composition, efficiency, and visual footprint

- BumpTop : Uses ~0.5 page for "DESIGN GOALS," covering realistic feel, physics control, paper-like icons, pen optimization, interface learnability, and smooth transitions

System Description and Interaction Techniques

- CrossY : Spends ~3 pages describing the application and interaction techniques, including command selection, document navigation, pen attributes, stroke operations, and implementation details

- BumpTop : Devotes ~5 pages to prototype and interaction techniques, covering core interactions, specialized features, visual search, pressure controls, and physics considerations

Evaluation Approach

- CrossY : Includes brief (~0.25 pages) informal user feedback from public demonstrations

- BumpTop : Contains ~1 page of qualitative user study results

Discussion and Insights

- CrossY : Features a detailed (~2.5 page) discussion examining implementation insights around expressiveness, discoverability, efficiency, and technical considerations

- BumpTop : Integrates insights into a concise ~0.5 page conclusion

Both papers prioritize detailed system descriptions over empirical validation, reflecting their focus on novel interaction paradigms. CrossY provides deeper reflection through its discussion section, while BumpTop condenses its insights into its conclusion. By the estimates above, each paper dedicates roughly 4 to 5.5 pages to design goals and system description (about 4 for CrossY and 5.5 for BumpTop), highlighting these as key selling points. This approach works because both systems feature compelling demonstration videos that speak for themselves. Without such clear visual evidence, more extensive validation would likely be needed, though space constraints would make this challenging given the systems' complexity.

6.1.4 Challenges of Writing System Papers

Our analysis of the five system papers highlighted a key observation: there is no one-size-fits-all approach to their composition. The authors’ strategic decisions — shaped by elements like the inclusion of a compelling video or the demonstrable empirical justification of key system factors — resulted in varied paper structures. Yet, regardless of the format, the fundamental goal remained consistent: to effectively communicate the system’s contribution to the readers. The core expectation for a system paper is that it convincingly demonstrates either (1) new possibilities that were previously unattainable, or (2) clear advantages — such as efficiency, usability, or expressiveness — over existing approaches. Additionally, strong system papers strive to extract and communicate generalizable conceptual insights that can inform future research and design.

However, assembling a compelling system paper is rarely straightforward. Systems are inherently complex, often involving numerous design decisions, technical challenges, and usage scenarios. Authors must make strategic choices about which aspects to foreground and elaborate, balancing the need to provide sufficient technical detail with the imperative to tell a clear, persuasive story. This involves prioritizing the most novel or impactful contributions, carefully framing the limitations of prior work, and selecting evaluation methods that best support the paper’s claims. The narrative should guide reviewers and readers to appreciate not only what the system does, but why it matters and how it advances the field.

Ultimately, the art of writing a successful system paper lies in curating the wealth of possible details into a focused, coherent argument — one that highlights the system’s unique value, substantiates its claims with appropriate evidence, and distills broader lessons for the HCI community. This process is particularly challenging: system papers are inherently complex, and assembling a compelling narrative requires careful attention to best practices throughout both the system development and the writing process.

It is important to recognize that system papers often take significantly longer to complete than empirical HCI research papers. While an experienced team might finish an empirical study within six months, a robust system paper typically requires a year or more of sustained effort. Even with the assistance of modern AI coding tools, which can accelerate some aspects of implementation, the intricate design decisions and integration details still demand substantial time and expertise to get right.

Given these challenges, adhering to best practices is crucial. We will explore these practices in more detail later in this chapter. Before discussing best practices, let's introduce toolkit papers — another important type of constructive research in HCI.

6.2 Interactive Toolkits

6.2.1 Brief Overview

While interactive systems are constructive solutions that allow users to accomplish a complex task interactively, toolkits are software frameworks or libraries that provide reusable building blocks for creating interactive systems. Unlike systems, which have no clear-cut definition, toolkits have been defined in a number of papers, for example in Evaluation Strategies for HCI Toolkit Research (Ledo et al., 2018). Toolkits can be thought of as platforms that help users create new interactive artifacts, simplify access to complex functionality, support rapid prototyping, and encourage creative exploration. They typically offer a programming or configuration environment made up of reusable building blocks or components, which users can combine in flexible ways to achieve their goals. Toolkits often provide features such as automation (for example, recognizing user gestures) or real-time data monitoring (such as visualization tools) to assist developers during the creation process. For instance, D3.js is a toolkit that empowers developers to create a wide variety of interactive data visualizations by providing a flexible set of tools and abstractions. Toolkits essentially serve as a bridge between low-level implementation details and high-level interaction design, allowing researchers and developers to focus on innovation in interaction design rather than reinventing basic functionality.

Toolkits and systems share several important similarities: both are complex artifacts that require careful planning, substantial engineering effort, and often undergo multiple iterations before reaching maturity. Developing either a system or a toolkit typically demands a long-term commitment, as both must integrate diverse components, address technical challenges, and support robust, usable interaction.

However, their core purposes and target audiences differ. Interactive systems are primarily designed to solve real-world problems or accomplish specific tasks for end users. Their success is measured by how effectively they enable people to achieve concrete goals in practical contexts. In contrast, toolkits are created for designers, developers, or researchers — people who want to build new interactive solutions for particular classes of tasks. Rather than directly addressing end-user needs, toolkits empower others to create systems or applications by providing reusable building blocks, abstractions, and development environments.

The rationale for developing toolkits is better described by five core goals, rather than the "be the first" or "be the best" framework:

- Streamlining Creation and Reducing Complexity: Toolkits simplify the process of building interactive systems by packaging complex ideas into reusable modules, allowing users — even those without deep technical backgrounds — to develop new applications more quickly and easily ( Dey et al., 2001 ).

- Guiding Users Toward Effective Solutions: By establishing clear rules, abstractions, and workflows, toolkits help users follow productive paths and avoid common mistakes, making it easier to achieve successful outcomes ( Greenberg & Fitchett, 2001 ).

- Broadening Access to New User Groups: Toolkits lower technical barriers, enabling a wider range of people — including non-programmers, artists, and designers — to participate in creating interactive technologies. For instance, interface builders have made interface design accessible to new audiences ( Hartmann et al., 2006 ).

- Supporting Integration with Existing Tools and Standards: Toolkits are often designed to work seamlessly with current infrastructures and standards, which increases their utility and adoption. For example, D3 ( Bostock et al., 2011 ) gained popularity in part because it integrated well with widely used web standards.

- Fostering Reuse and Creative Exploration: By making it easy to replicate and extend ideas, toolkits encourage the development of new tools and solutions that can be combined in novel ways, enabling broader exploration and the creation of more powerful systems ( Myers, 1995 ).

Two illustrative examples of toolkit papers are Phidgets ( Greenberg & Fitchett, 2001 ) and D3 ( Bostock et al., 2011 ). Phidgets pioneered a new approach to physical user interface development by providing a modular hardware and software toolkit that dramatically lowered the barrier for prototyping tangible interfaces, enabling rapid experimentation and creative exploration. In contrast, D3 redefined the state of the art in data visualization toolkits by introducing a powerful, flexible architecture for binding data to web-based visual elements, offering both technical innovation and quantifiable improvements in expressiveness and performance over previous tools.

6.2.2 Research Approach for Interactive Toolkit

While toolkit development shares some common ground with system research, its component composition and research approach differ in important ways due to differences in target audience and contribution type. The key challenge lies in balancing technical implementation details with ecosystem design: toolkits must provide sufficient power and flexibility for experienced developers while remaining accessible to their target user base.

A critical but often underestimated challenge is the identification and instrumentalization of common components shared across applications. Researchers must first develop a deep understanding of recurring patterns and abstractions within a domain, which typically requires hands-on experience building multiple applications before higher-level insights emerge. This pattern recognition and generalization process is far from trivial, often requiring researchers to cycle through several application development iterations before core abstractions crystallize into reusable toolkit components.

Developing an effective toolkit requires a combination of technical expertise and human-centered design thinking. Key skill areas include:

- Understanding developer needs and pain points through systematic analysis

- Strong software architecture design capabilities

- API design skills balancing flexibility with usability

- Domain knowledge to identify universal abstractions from recurring patterns

- Iterative prototyping to refine technical foundations

- Empathy for toolkit users and their workflow constraints

- Evaluative rigor to assess technical merit and utility across contexts

As discussed above, while toolkit papers offer exciting opportunities to showcase technical innovation, they present significant challenges that may render them less suitable as first projects for novice researchers. These challenges include extended development timelines, demanding software architecture and implementation skill requirements, and complex integration challenges.

However, the rewards can be transformative. Successful toolkits empower developers to create previously impossible applications while lowering barriers and expanding creative possibilities. When codified in papers, they create ripple effects as others build upon the work by adopting its abstractions, extending its architecture, and applying its insights to new domains. There is particular satisfaction in seeing one's solutions referenced as foundational work. The process also deepens understanding of human-computer interaction fundamentals, combining technical innovation with academic discourse. This dual impact, enabling practical solutions while advancing knowledge, gives system and toolkit research unique potential to influence both industry and academia in the long term.

In the next section, we examine concrete examples of successful system papers and toolkit implementations, analyzing how they balance these components to communicate their contributions effectively through detailed case studies.

6.2.3 Toolkit Paper Common Components and Examples

To identify common components and effective strategies for toolkit papers, we can analyze seminal works like Phidgets (2001) and D3 (2011). These papers establish reusable patterns through their combination of technical specificity, accessible walkthroughs, and principled validation. The structural comparison below synthesizes insights from both papers, highlighting key components that characterize successful toolkit papers:

Background Information

The Phidgets paper (Greenberg & Fitchett, 2001) introduces physical widgets ("phidgets") that function as building blocks for tangible interfaces, mirroring how GUI widgets abstract interface components. Phidgets encapsulate hardware complexity through a standardized API while providing both physical device control and virtual simulation capabilities. Key innovations include a connection management system for dynamic hardware detection and binding, a framework ensuring consistent software-physical object associations, and an evaluation demonstrating accelerated development cycles for physical interface prototyping. By enabling programmers to work with high-level abstractions regardless of physical hardware availability, the Phidgets toolkit significantly lowered barriers to exploring tangible interaction designs.
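To make the idea of a widget-style abstraction with interchangeable physical and simulated backends more concrete, here is a minimal sketch. It is not the Phidgets API (which was exposed through COM objects and ActiveX controls); every class and method name below is hypothetical and chosen only for illustration.

```python
from typing import Callable, Dict, List, Optional, Protocol


class ServoBackend(Protocol):
    """Anything that can position a servo, whether real or simulated."""

    def set_angle(self, degrees: float) -> None: ...


class SimulatedServo:
    """Stand-in for hardware: simply remembers the commanded angle."""

    def __init__(self) -> None:
        self.angle = 0.0

    def set_angle(self, degrees: float) -> None:
        self.angle = degrees


class ServoWidget:
    """High-level widget; application code talks only to this class."""

    def __init__(self, backend: ServoBackend) -> None:
        self.backend = backend
        self._listeners: List[Callable[[float], None]] = []

    def on_change(self, listener: Callable[[float], None]) -> None:
        self._listeners.append(listener)

    def move_to(self, degrees: float) -> None:
        # Clamp to an assumed 0-180 degree range before touching the device.
        clamped = max(0.0, min(180.0, degrees))
        self.backend.set_angle(clamped)
        for listener in self._listeners:
            listener(clamped)


class ConnectionManager:
    """Hypothetical manager: binds widgets to attached devices by identifier
    and falls back to simulation when no device is present."""

    def __init__(self) -> None:
        self._attached: Dict[str, ServoBackend] = {}

    def attach(self, device_id: str, backend: ServoBackend) -> None:
        self._attached[device_id] = backend

    def open_servo(self, device_id: str) -> ServoWidget:
        backend: Optional[ServoBackend] = self._attached.get(device_id)
        if backend is None:
            backend = SimulatedServo()
        return ServoWidget(backend)


# No hardware attached, so the widget is backed by the simulation.
manager = ConnectionManager()
servo = manager.open_servo("servo-1")
angles: List[float] = []
servo.on_change(angles.append)
servo.move_to(270)
print(angles)  # [180.0]
```

The design choice worth noting is that application code never learns which backend it received, which is what allows prototyping to continue when the physical device is unavailable.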

The D3 paper ( Bostock et al., 2011 ) presents a groundbreaking approach to web-based data visualization by introducing a declarative paradigm for directly manipulating document elements based on data. Unlike previous visualization toolkits that abstracted away the DOM, D3 embraces the web's native capabilities through a novel data-driven document model. At its core, D3 introduces a declarative syntax for binding datasets to DOM elements and applying dynamic transformations through data joins — maintaining synchronization between data and visual representations. This approach combines the benefits of direct DOM manipulation (for debugging and toolchain integration) with high-level visualization abstractions. The paper demonstrates how these innovations enabled richer visual expressiveness, easier debugging workflows, and performance improvements over prior tools like Protovis — particularly in animation and interaction scenarios. By aligning with web standards while exposing lower-level control, D3 empowered both designers and developers to create complex, interactive visualizations that were previously difficult to implement.
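The data join described above partitions work into three groups, which D3 calls enter, update, and exit. The following language-neutral sketch shows that partitioning in plain Python. It does not use or reproduce D3's JavaScript API; the functions `data_join` and `sync`, and the dictionary-based "elements", are hypothetical stand-ins for DOM nodes.

```python
from typing import Callable, Dict, Hashable, Iterable, List, Tuple

Datum = dict    # one data record, e.g. {"id": "a", "value": 10}
Element = dict  # stand-in for a rendered element (not a real DOM node)


def data_join(
    elements: Dict[Hashable, Element],
    data: Iterable[Datum],
    key: Callable[[Datum], Hashable],
) -> Tuple[List[Datum], List[Tuple[Element, Datum]], List[Element]]:
    """Match data to existing elements by key.

    Returns (enter, update, exit):
      enter  - data with no matching element yet
      update - (element, datum) pairs that matched
      exit   - elements whose datum has disappeared
    """
    incoming = {key(d): d for d in data}
    enter = [d for k, d in incoming.items() if k not in elements]
    update = [(elements[k], d) for k, d in incoming.items() if k in elements]
    exit_ = [e for k, e in elements.items() if k not in incoming]
    return enter, update, exit_


def sync(
    elements: Dict[Hashable, Element],
    data: Iterable[Datum],
    key: Callable[[Datum], Hashable],
) -> None:
    """Bring `elements` in line with `data` (assumes each element stores its key as "id")."""
    enter, update, exit_ = data_join(elements, list(data), key)
    for element, datum in update:
        element["height"] = datum["value"]            # refresh matched elements
    for datum in enter:
        elements[key(datum)] = {"id": key(datum), "height": datum["value"]}
    for element in exit_:
        del elements[element["id"]]                   # remove stale elements


# Two bars exist; the new data drops "a", changes "b", and adds "c".
bars = {"a": {"id": "a", "height": 10}, "b": {"id": "b", "height": 20}}
sync(bars, [{"id": "b", "value": 25}, {"id": "c", "value": 5}], key=lambda d: d["id"])
print(bars)  # {'b': {'id': 'b', 'height': 25}, 'c': {'id': 'c', 'height': 5}}
```

Keeping the three groups separate is what lets a visualization treat new, changed, and removed items differently, for example by animating each group in its own way.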

Comparison of the two papers

Core Problem Identification

Both papers:

- Begin with concrete pain points in the target domain (Phidgets: hardware interfacing difficulties; D3: DOM abstraction limitations)

- Frame the problem as an accessibility issue ("average programmers" vs "web designers")

- Position themselves against prior approaches (Phidgets vs custom one-off devices; D3 vs Protovis/jQuery)

Architectural Approaches

Shared technical philosophy:

- Abstraction through encapsulation: Phidgets: Hardware -> COM objects; D3: DOM manipulation -> Declarative operators

- Layered design: Phidgets: Physical device <- Wire <- Manager <- API; D3: DOM <- Selections <- Data joins <- Modules

- Dual representation: Phidgets: Physical device + ActiveX control simulation; D3: Direct DOM manipulation + Helper modules

Evaluation Strategies

Common validation approaches:

- Novice usability studies: Phidgets: Student projects; D3: Transition from Protovis users

- Performance metrics: Phidgets: Development time reduction; D3: Frame rate benchmarks

- Rich example gallery: Phidgets: Flower bloom, Power Dimmer; D3: Treemaps, Chord diagrams

Through analysis of the two toolkit examples presented in this section ( D3 and Phidgets ), several recurring components emerge as critical elements in successful toolkit papers. These components form a foundation for effectively communicating a toolkit's technical innovations while demonstrating its practical value to both developers and the research community. We elaborate on these recurring elements below:

Core structural elements shared across toolkit papers

- Motivation:
  - Problem space analysis: Why the toolkit is needed
  - Pain points analysis: What existing approaches lack
  - Value proposition: What new capabilities become possible

- Architecture Blueprint:
  - Visual system diagram showing key components
  - Design philosophy explanation (How components collaborate)
  - Technical innovation map (What makes it different/special)

- Developer Journey:
  - Code tutorials: Step-by-step API walkthroughs
  - Common patterns: Recipes for frequent use cases
  - Debugging tips: Pitfalls to avoid

- Capability Spectrum:
  - Example gallery from simple hello-world to complex deployments
  - Showcase versatility through contrasting applications
  - Exploration boundaries (What it does/doesn't handle well)

- Evaluation Grid:
  - Performance benchmarks (How fast/efficient)
  - Usability metrics (How easy to learn/use)
  - Comparison matrix vs alternatives (When to choose which)

- Adoption Roadmap:
  - Migration strategies from legacy systems
  - Integration techniques with common workflows
  - Maintenance and extension guidelines

Additional Considerations for Effective Toolkit Papers

Effective toolkit papers often employ strategic presentation techniques to demonstrate their value. Both analyzed papers utilize "before/after" comparisons to showcase their toolkit's benefits — Phidgets through hardware programming examples (Fig 7 VB demonstration) and D3 through visualizations comparing manual DOM manipulation to D3's declarative approach (Figs 3,7).

Both papers exemplify balanced self-assessment through frank discussion of limitations. The Phidgets paper acknowledges practical constraints, including scarce documentation and cost barriers for hardware-based mass production. Similarly, the D3 paper transparently addresses its steeper learning curve compared to its predecessor Protovis and its performance limitations when rendering complex SVG visualizations. This honesty strengthens credibility while helping readers make informed adoption decisions.

6.2.4 Evaluating Toolkits: Challenges & Strategies

Evaluating toolkits presents unique challenges that extend beyond traditional system evaluation. While system evaluation inherently deals with technical complexity, toolkit assessment must account for multiple interdependent factors: the toolkit's technical performance, its ability to simplify workflows for developers, its adaptability across unpredictable use cases, and its alignment with existing ecosystems. Unlike self-contained systems, toolkits are generative by design: their true value emerges through creative reuse, often in ways designers cannot anticipate. This dual mandate, which requires rigorous technical validation while also capturing the toolkit's capacity to empower diverse users and inspire novel applications, creates an evaluation landscape where traditional usability metrics and performance benchmarks tell only part of the story. These challenges are discussed in depth in Evaluation Strategies for HCI Toolkit Research (Ledo et al., 2018); we highlight some of the key points below.

Suggested Reading: "Evaluation Strategies for HCI Toolkit Research"

Why Toolkit Evaluation is Difficult

- Generative Nature: Toolkits enable diverse applications beyond initial design intent, making comprehensive evaluation impossible

- Dual Focus Requirement: Must assess both technical capabilities and human-centered outcomes (usability/utility)

- Ecosystem Dependencies: Performance depends on hardware/software context (e.g., Phidgets' USB vs D3's browser compatibility)

- Temporal Factors: True impact often emerges post-publication through community adoption (e.g., D3's web standards alignment)

A key contribution of Ledo et al.'s work is the categorization of four primary evaluation strategies for toolkit research:

- Demonstration : Showcasing what can be built with the toolkit through example applications or case studies.

- Usage : Investigating who can use the toolkit and how, often through user studies, walkthroughs, or interviews with target audiences.

- Technical Performance : Assessing how well the toolkit works, typically via benchmarks, performance metrics, or stress tests.

- Heuristics : Applying expert reviews or heuristic evaluations to assess the toolkit's design and usability.

The paper emphasizes that the choice of evaluation strategy should be closely aligned with the claims and intended contributions of the toolkit. For example, a toolkit that claims to lower the barrier for novice users should include usage studies with representative participants, while a toolkit that claims technical superiority should provide rigorous performance benchmarks.
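The alignment between claims and evaluation strategies can be made explicit when planning a paper. The following is a minimal sketch, not part of Ledo et al.'s work: the claim labels and the `CLAIM_TO_STRATEGIES` table are hypothetical. The first two mappings follow the examples in the paragraph above; the last two are our own assumptions based on the strategy descriptions.

```python
from enum import Enum


class Strategy(Enum):
    DEMONSTRATION = "demonstration"
    USAGE = "usage"
    TECHNICAL_PERFORMANCE = "technical performance"
    HEURISTICS = "heuristics"


# Hypothetical claim labels mapped to the strategies that could support them.
CLAIM_TO_STRATEGIES = {
    "lowers_barrier_for_novices": [Strategy.USAGE],
    "technical_superiority": [Strategy.TECHNICAL_PERFORMANCE],
    "enables_new_applications": [Strategy.DEMONSTRATION],  # assumption
    "sound_api_design": [Strategy.HEURISTICS],             # assumption
}


def plan_evaluation(claims):
    """Return the suggested strategies for the given claims, in order, without duplicates."""
    plan = []
    for claim in claims:
        for strategy in CLAIM_TO_STRATEGIES.get(claim, []):
            if strategy not in plan:
                plan.append(strategy)
    return plan


def unsupported_claims(claims, planned):
    """List claims that no planned strategy backs up (unknown claims are always listed)."""
    return [
        claim
        for claim in claims
        if not any(s in planned for s in CLAIM_TO_STRATEGIES.get(claim, []))
    ]


claims = ["lowers_barrier_for_novices", "technical_superiority"]
print([s.value for s in plan_evaluation(claims)])
# ['usage', 'technical performance']
print(unsupported_claims(claims, [Strategy.DEMONSTRATION]))
# ['lowers_barrier_for_novices', 'technical_superiority']
```

A check like `unsupported_claims` is only a planning aid: it flags claims that the planned evaluation leaves unexamined, but it cannot judge whether a given study is convincing.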

Critical Considerations

Researchers must align their evaluation methods with the toolkit's contribution type: technical innovations merit rigorous benchmarks (e.g., algorithmic performance metrics), while novel interaction paradigms require design space demonstrations through real-world examples. Ensuring ecological validity involves balancing controlled laboratory studies — exemplified by D3's code comparison experiments — with authentic deployment contexts like Phidgets' classroom implementations. Toolkit lifespan evaluation should consider temporal dimensions, assessing immediate usability metrics alongside longitudinal measures such as community adoption trends and patterns of derivative work.

Transparency remains critically important when communicating technical tradeoffs. Developers should clearly acknowledge optimization boundaries (as seen in D3's disclosed SVG rendering limits) and simulation constraints (like Phidgets' distinctions between ActiveX controls and physical device behaviors). A toolkit's infrastructure value emerges through its capacity to enable new research trajectories; the Proximity Toolkit's facilitation of spatial interaction studies demonstrates this principle.

Effective evaluation combines multiple complementary strategies. D3's holistic approach weds technical benchmarks with real-world demonstration galleries and heuristic alignment to web standards, while Phidgets synergizes classroom usage studies with hardware performance metrics. This multidimensional validation strategy helps establish both technical efficacy and practical relevance.

6.3 The Ideal Workflow for System/Toolkit Development

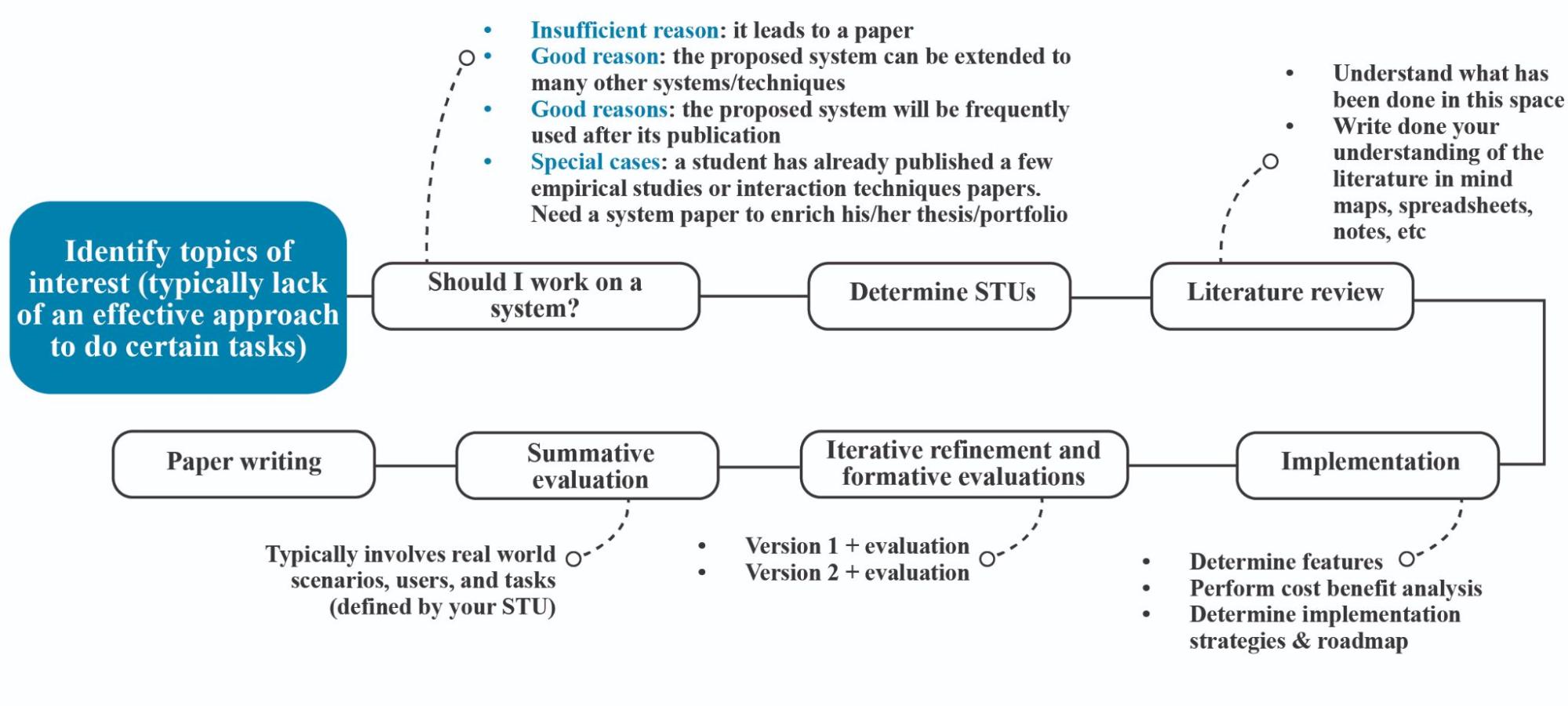

Having established a foundation for understanding both system and toolkit research, we now turn to an effective workflow for conducting such projects (see Figure 6.1). A structured approach is particularly important given the substantial investment required: projects of this scale demand rigorous planning and validation to justify their development costs. The following workflow balances methodological rigor with practical considerations and draws on analysis of successful projects and common failure patterns in the field.

Figure 6.1: The “ideal” workflow of a system/toolkit project ( first or best )

Strategic Foundations

Identifying Interest Areas The journey begins by recognizing unmet needs and growth opportunities within the HCI landscape. Researchers should maintain a "problem journal" tracking observed challenges where current solutions prove inadequate through field observations, user interviews, or personal prototyping struggles. A promising direction emerges when these pain points reveal a pattern — recurring issues that existing tools fail to address, or novel interaction paradigms requiring new foundational infrastructure. For example, the development of ARKit stemmed from recognizing the convergence of mobile GPU capabilities, sensor improvements, and emerging augmented reality applications lacking robust development frameworks.

Assessing Development Costs and Long-Term Viability Undertaking system or toolkit development is akin to founding a startup — both require years of sustained investment before potentially yielding returns. A 2018 study of 50 HCI system papers revealed that 68% took over 18 months from conception to publication, with post-publication maintenance averaging 2.7 years for projects achieving sustained adoption ( Bansal & Khan, 2018 ). This longitudinal commitment demands careful forecasting through what-if scenarios:

- Longevity Analysis : Will this work remain relevant given current technology trajectories? An IoT toolkit targeting soon-to-be-deprecated wireless protocols might become obsolete before completion.

- Maintenance Mapping : Do you have institutional support and human resources beyond initial publication? The Polymaps geovisualization toolkit persisted through three PhD generations at its founding lab.

- Adoption Potential Assessment : What tangible advantages would drive adoption over "good enough" alternatives? D3.js overcame initial complexity concerns by enabling visualizations impossible with previous libraries.

- Cost-Benefit Validation : Could the desired outcomes be achieved through simpler means? Many failed toolkit projects attempted to "boil the ocean" rather than solve focused problems.

Teams should conduct a pre-mortem exercise early in the planning phase: envisioning potential failure scenarios and identifying preventable risks. Common pitfalls include underestimating documentation overhead (averaging 30% of development time for successful toolkits), overlooking dependency management, and misjudging target users' technical capacities.

Architectural Pivot Points

Defining Situations, Tasks, Users (STUs) This foundational step transforms vague aspirations into actionable specifications. Effective STU definition employs scenario-based design methods, creating detailed vignettes of real-world usage contexts. A medical AR toolkit might specify: "Surgeons (users) need intraoperative guidance (tasks) in resource-limited field hospitals where network connectivity fluctuates (situation)." These scenarios drive technical requirements like offline functionality and low-latency rendering. The STU framework acts as a compass throughout development — when evaluating feature requests, teams should assess alignment with originally specified situations, tasks, and user needs.
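One way to keep an STU definition actionable is to record it as data and check feature requests against it. The sketch below is illustrative only: the `STU` and `FeatureRequest` classes, the `is_aligned` rule, and the two feature requests are hypothetical. The scenario and its two requirements come from the medical AR example above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class STU:
    situation: str
    task: str
    user: str
    requirements: frozenset  # technical requirements derived from the scenario


@dataclass(frozen=True)
class FeatureRequest:
    name: str
    supports: frozenset  # requirements this feature would help satisfy


def is_aligned(stu, request):
    """Treat a request as aligned if it supports at least one STU-derived requirement."""
    return bool(stu.requirements & request.supports)


medical_ar = STU(
    situation="resource-limited field hospital with fluctuating network connectivity",
    task="intraoperative guidance",
    user="surgeon",
    requirements=frozenset({"offline functionality", "low-latency rendering"}),
)

# Hypothetical feature requests used only to exercise the check.
local_cache = FeatureRequest("local model cache", frozenset({"offline functionality"}))
social_sharing = FeatureRequest("social sharing", frozenset({"cloud sync"}))

print(is_aligned(medical_ar, local_cache))     # True
print(is_aligned(medical_ar, social_sharing))  # False
```

The overlap test is deliberately crude. In practice a team would still discuss each request, but a recorded STU gives that discussion a fixed reference point.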

Contribution Validation Before writing a single line of code, researchers must validate their proposed system's unique value proposition. This involves three proof points:

- Capability Gap Analysis : Systematically compare existing solutions using feature matrices and capability models. A computer vision toolkit might benchmark API coverage across OpenCV, PyImageSearch, and commercial cloud services.

- Wizard of Oz Prototyping : Create "fake door" prototypes to test theoretical benefits. Early augmented reality researchers validated spatial UI concepts using masked smartphones years before ARCore's existence.

- Expert Stakeholder Reviews : Present concept papers to domain specialists. The Puppet Scripting toolkit refined its direction based on animation studio CTO feedback prior to implementation.
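The capability gap analysis in the first proof point can be sketched as a simple feature matrix. This is a minimal illustration under stated assumptions: "Tool A" and "Tool B" are placeholder names, and the capability labels are invented for the example; they do not describe any real library.

```python
def capability_gaps(matrix, required):
    """For each existing tool, return the sorted list of required capabilities it lacks."""
    return {tool: sorted(required - caps) for tool, caps in matrix.items()}


def uncovered_everywhere(matrix, required):
    """Return required capabilities that no existing tool offers."""
    covered = set().union(*matrix.values()) if matrix else set()
    return sorted(required - covered)


# Hypothetical feature matrix: tool name -> set of capabilities it provides.
feature_matrix = {
    "Tool A": {"object detection", "camera calibration"},
    "Tool B": {"object detection", "cloud inference"},
}
required = {"object detection", "camera calibration", "on-device tracking"}

print(capability_gaps(feature_matrix, required))
# {'Tool A': ['on-device tracking'], 'Tool B': ['camera calibration', 'on-device tracking']}
print(uncovered_everywhere(feature_matrix, required))
# ['on-device tracking']
```

Capabilities that appear in `uncovered_everywhere` are candidates for the proposed system's unique contribution. An empty result suggests that existing solutions may already be good enough.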

Implementation Strategy

Staged Development Approach Adopting a phased implementation strategy mitigates risk while maintaining momentum. The Eclipse IDE's successful evolution demonstrates this principle — initial releases focused on core Java tooling, with subsequent versions expanding through carefully vetted community contributions. Each stage should produce:

- Working prototypes answering key technical questions

- Validation checkpoints assessing usability and performance

- Architecture reviews ensuring extensibility

Iterative Refinement Cycle Real-world development resembles a spiral model more than a waterfall model. The Unity game engine's evolution showcases effective iteration: early versions focused on macOS 3D support, but continual user feedback drove expansion to 25+ platforms. Researchers should plan for at least three major iterations:

- Proof-of-Concept : Validates core technical approach through stripped-down implementation

- Feature-Complete Beta : Implements all planned functionality for real-world testing

- Polish and Optimization : Addresses performance bottlenecks and edge cases revealed through adoption

Documentation must evolve alongside codebases. Successful projects like React Native maintain parallel tracks for API development and tutorial creation, recognizing that discoverability is as crucial as functionality.

Sustainability Planning

Adoption Lifecycle Management Transitioning from research project to widely used tool requires deliberate community building. The Computer Vision Annotation Tool (CVAT) grew through structured outreach:

- Early access programs with target user groups

- Hackathons showcasing integration capabilities

- Contributor guidelines fostering open-source participation

Maintenance Infrastructure Planning for longevity involves:

- Automated testing harnesses preventing regression

- CI/CD pipelines streamlining updates

- Versioning policies managing breaking changes

- Deprecation timelines for obsolete components

Projects like Apache OpenOffice demonstrate the risks of neglecting these aspects: once dominant, its complex codebase and poor maintenance processes led to its being supplanted by the fork LibreOffice.
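Two of the maintenance practices listed above, versioning policies and deprecation timelines, can be illustrated in a few lines. The sketch below assumes a semantic-versioning policy (a major-version bump signals a breaking change), which the text does not prescribe. The function names `read_sensor` and `read_sensor_v2` and the version numbers are hypothetical.

```python
import functools
import warnings


def deprecated(since, removal, alternative):
    """Mark a function as deprecated and announce the version in which it will be removed."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            warnings.warn(
                f"{func.__name__} is deprecated since {since} and will be "
                f"removed in {removal}; use {alternative} instead.",
                DeprecationWarning,
                stacklevel=2,  # point the warning at the caller, not the wrapper
            )
            return func(*args, **kwargs)
        return wrapper
    return decorator


def is_breaking_change(old, new):
    """Under semantic versioning, a higher major version signals a breaking change."""
    return int(new.split(".")[0]) > int(old.split(".")[0])


@deprecated(since="1.4.0", removal="2.0.0", alternative="read_sensor_v2")
def read_sensor():
    # Hypothetical legacy API kept alive during the deprecation window.
    return 0


print(is_breaking_change("1.4.0", "2.0.0"))  # True
print(is_breaking_change("1.4.0", "1.5.0"))  # False
```

Calling `read_sensor()` still works but emits a `DeprecationWarning`, giving downstream users a full release cycle to migrate before the function disappears.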

Dissemination Strategy

Academic Documentation Crafting the research paper requires balancing technical detail with narrative flow. Successful system papers employ:

- Architecture diagrams mapping technical innovation

- Usage timelines showing efficiency gains

- Comparative benchmarks quantifying improvements

- Failure analyses documenting lessons learned

Community Engagement Post-publication activities significantly impact longevity. The Processing toolkit sustained relevance through:

- Annual "Processing Foundations" conferences

- Educator fellowship programs

- Corporate sponsorship of development sprints

By viewing system/toolkit development as an ongoing process rather than a one-off project, researchers can create artifacts that evolve with their fields while continuing to generate impactful scholarship.

6.4 Overall Guidelines for Constructive Research

Constructive research in HCI embodies a crucial translation process — transforming insights from user studies and theoretical knowledge into tangible systems that deliver real-world benefits. This unique value proposition requires researchers to balance technical implementation with knowledge generation. Simply documenting a technical solution proves insufficient; successful constructive research must clearly articulate novel contributions — what new capabilities emerge, why they matter, and how they advance the field beyond incremental improvements.

These projects typically progress through four phases of increasing complexity: technique innovation ("be the first"), methodological refinement ("be the best"), system development, and toolkit creation. Early-stage researchers often find technique innovation projects particularly rewarding as initial explorations, because they focus on developing novel computational methods for previously unaddressed problems, such as creating new sensing modalities for fundamental interactions like pointing. These bounded-scope projects establish core technical competencies while offering clear milestones for completion.

"Be the best" technique projects, while requiring more nuanced problem identification, offer structured pathways for optimization once researchers identify measurable improvement opportunities over existing techniques. Both these entry-level project types help build essential research muscles before tackling more complex challenges.

System development demands higher-order integration skills, typically requiring 12-18 months to combine multiple technical components into architectures meeting real-world requirements. Toolkit creation represents the pinnacle of complexity, combining system-level engineering with ecosystem design awareness. While these advanced project types prove invaluable for experienced researchers with strong engineering skills, particularly given their potential for broad community impact, they present steep learning curves in infrastructure design and maintenance strategies that often challenge early-career researchers.

Successful progression through these research tiers relies on rigorous validation at each stage. From controlled experiments measuring technical performance to longitudinal studies assessing real-world adoption, researchers must maintain methodological rigor through comparative benchmarking, ecologically valid user studies, and transparent reporting of limitations. As emphasized throughout Chapters 5 and 6 , the most impactful constructive research not only solves practical problems but also generates fundamental insights about human-computer interaction — requiring researchers to clearly communicate both their technical innovations and their conceptual contributions to the field.

References

Kazi, R. H., Chua, K. C., Zhao, S., Davis, R., & Low, K. L. (2011, May). SandCanvas: A multi-touch art medium inspired by sand animation. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems (pp. 1283-1292).

Kazi, R. H., Chevalier, F., Grossman, T., Zhao, S., & Fitzmaurice, G. (2014, April). Draco: bringing life to illustrations with kinetic textures. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems (pp. 351-360).

Ram, A., Xiao, H., Zhao, S., & Fu, C. W. (2023). VidAdapter: Adapting Blackboard-Style Videos for Ubiquitous Viewing. Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies, 7(3), 1-19.

Dixon, M., & Fogarty, J. (2010, April). Prefab: implementing advanced behaviors using pixel-based reverse engineering of interface structure. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems (pp. 1525-1534).

Agarawala, A., & Balakrishnan, R. (2006, April). Keepin' it real: pushing the desktop metaphor with physics, piles and the pen. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems (pp. 1283-1292).

Apitz, G., & Guimbretière, F. (2004, October). CrossY: a crossing-based drawing application. In Proceedings of the 17th annual ACM symposium on User interface software and technology (pp. 3-12).

Nebeling, M. (2017, May). Playing the Tricky Game of Toolkits Research. In Workshop on HCI.Tools at CHI.

Ghosh, D., Foong, P. S., Zhao, S., Liu, C., Janaka, N., & Erusu, V. (2020, April). EYEditor: Towards on-the-go heads-up text editing using voice and manual input. In Proceedings of the 2020 CHI Conference on Human Factors in Computing Systems (pp. 1-13).

Janaka, N., Cai, R., Ram, A., Zhu, L., Zhao, S., & Yong, K. Q. (2024). PilotAR: Streamlining pilot studies with OHMDs from concept to insight. Proceedings of the ACM on Interactive, Mobile, Wearable and Ubiquitous Technologies, 8(3), 1-35.

Ledo, D., Houben, S., Vermeulen, J., Marquardt, N., Oehlberg, L., & Greenberg, S. (2018, April). Evaluation strategies for HCI toolkit research. In Proceedings of the 2018 CHI conference on human factors in computing systems (pp. 1-17).

Dey, A. K., Abowd, G. D., & Salber, D. (2001). A conceptual framework and a toolkit for supporting the rapid prototyping of context-aware applications. Human–Computer Interaction, 16(2-4), 97-166.

Greenberg, S., & Fitchett, C. (2001, November). Phidgets: easy development of physical interfaces through physical widgets. In Proceedings of the 14th annual ACM symposium on User interface software and technology (pp. 209-218).

Hartmann, B., Klemmer, S. R., Bernstein, M., Abdulla, L., Burr, B., Robinson-Mosher, A., & Gee, J. (2006, October). Reflective physical prototyping through integrated design, test, and analysis. In Proceedings of the 19th annual ACM symposium on User interface software and technology (pp. 299-308).

Bostock, M., Ogievetsky, V., & Heer, J. (2011). D³: Data-driven documents. IEEE Transactions on Visualization and Computer Graphics, 17(12), 2301-2309.

Myers, B. A. (1995). User interface software tools. ACM Transactions on Computer-Human Interaction (TOCHI), 2(1), 64-103.

Bansal, H., & Khan, R. (2018). A review paper on human computer interaction. International Journal of Advanced Research in Computer Science and Software Engineering, 8(4), 53.