Chapter 3: Introduction to Empirical Research and its Overall Workflow

In the previous chapter, we explored what constitutes valuable knowledge contributions within HCI research and provided an overview of the diverse types of contributions that researchers can make. Building upon that foundation, this chapter will now dive deeper into one specific and highly prevalent type of contribution: empirical research. We will examine its core principles, methodologies, and the overall workflow involved in conducting rigorous empirical studies.

3.1 What is empirical research, and why conduct it?

Empirical research is a foundational method in HCI, characterized by its systematic approach to collecting and analyzing data through observation, experimentation, or measurement. Its primary aim is to understand, explain, or predict human behavior and interactions with technology.

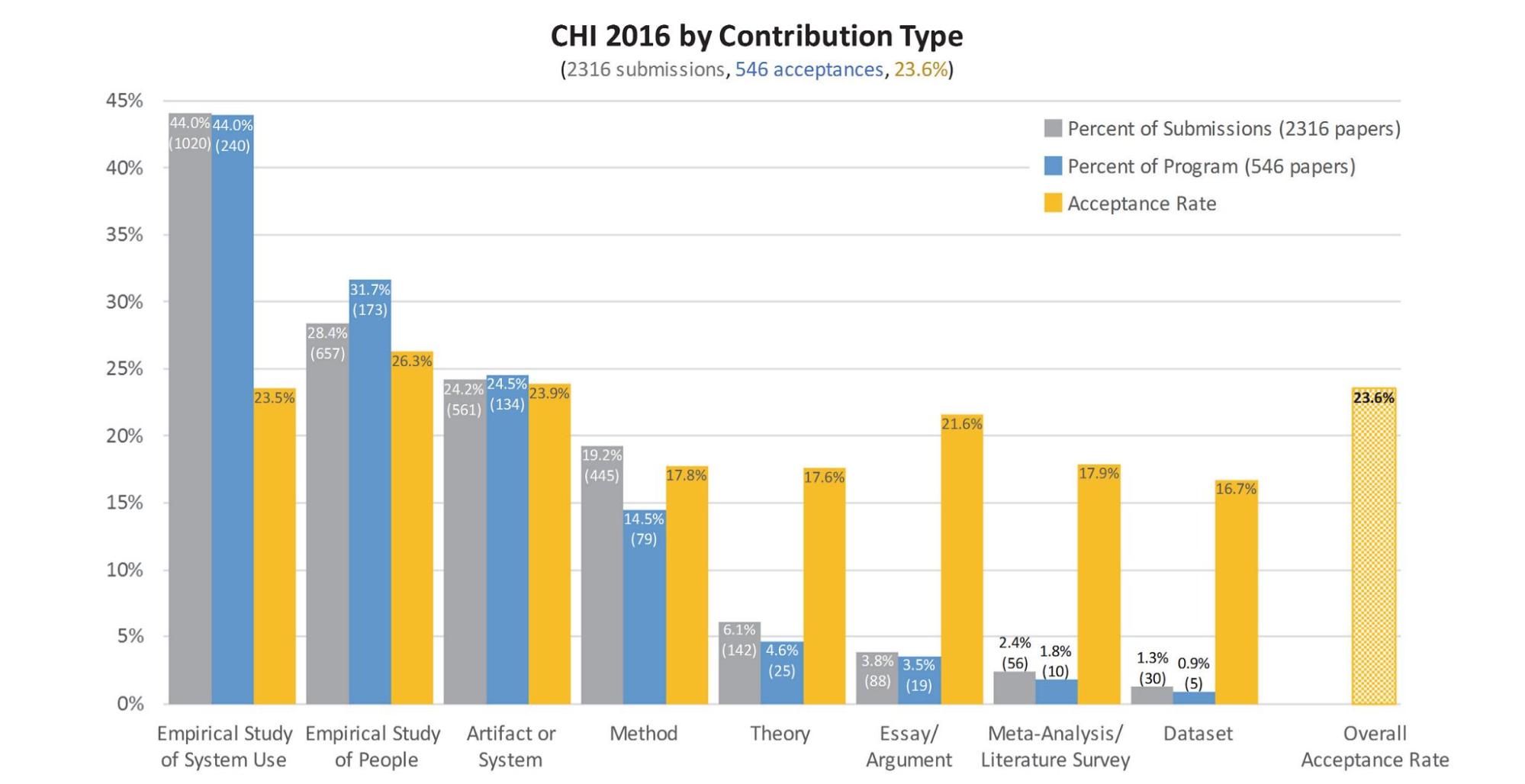

This method serves as the bedrock of HCI because the field strives to contribute new, validated, and useful knowledge. Empirical research, deeply rooted in the scientific method, provides the crucial means for this validation. While students and researchers alike may find artifact-centric (constructive) contributions particularly exciting, it is through empirical investigation that we can rigorously demonstrate why a novel solution is genuinely useful or how it compares against existing alternatives. Without systematically gathered evidence of effectiveness and usability, the true value of a proposed system or design remains unsubstantiated. Consequently, most proposed solutions must ultimately be evaluated with users through empirical methods, making such studies a cornerstone of scholarly contributions at leading HCI conferences like CHI (see Figure 3.1, from Wobbrock & Kientz, 2016).

Figure 3.1: Paper Type Distribution at CHI 2016. This chart illustrates the overwhelming predominance of empirical studies which focus on the usage of systems and human behaviors, accounting for over 70% of all submissions.

Looking back at the three problem types in HCI discussed in Chapter 2 (Empirical, Conceptual, and Constructive), empirical research is not only a standalone category but is also fundamentally integrated into the other types. For conceptual research, a common pattern involves:

Observation [empirical] -> generalizable insights -> validate insights in multiple contexts [empirical]

Similarly, constructive research often follows a path where empirical work is key:

Observation [empirical] -> insights -> design -> implementation -> validate effectiveness [empirical]

These patterns show that empirical methods serve a dual role: they generate initial insights, and they act as an essential validation mechanism across all types of HCI research, although they are not the only viable research approach.

3.2 The two subtypes of empirical studies

As previously described, our approach consolidates the three subtypes of empirical contribution into two for simplicity: (1) unknown phenomena, and (2) unknown factors and effects. Each subtype is defined by its own focus and objectives, which helps structure how empirical studies are explored and analyzed. Let's look at Subtype 1 and Subtype 2 in turn.

Subtype 1: Exploring Phenomena in a Holistic Manner

- This approach involves broadly studying a phenomenon within HCI to understand its general patterns and implications. For this type of research to be impactful, it must focus on phenomena that capture the attention and interest of the HCI community.

- While there's no universal formula for what makes a phenomenon "interesting", successful papers in this category typically study phenomena that exhibit several key characteristics:

- Timeliness: The phenomenon represents emerging practices or contexts that warrant investigation (e.g., hackathons as new forms of collaborative work).

- Societal Impact: The phenomenon can matter in different ways, through broad reach affecting many users or by addressing critical needs of specific underserved communities. Both routes, among others, can demonstrate a meaningful societal contribution.

- Technological Disruption: The phenomenon shows how new technological platforms are reshaping work and social practices (e.g., gig economy platforms' impact on freelancers).

- Research Potential: The phenomenon offers opportunities to develop theoretical frameworks and design implications (e.g., understanding participation patterns in hackathons).

When writing about such phenomena, researchers must clearly articulate why their chosen phenomenon warrants attention from both the HCI community and society at large. This often involves highlighting the scale of impact, identifying affected stakeholders, and demonstrating gaps in current understanding.

Example papers:

Subtype 2: Analyzing Specific Factors and Their Effects

- While Subtype 1 explores broad phenomena, Subtype 2 emerges from the specific questions and hypotheses that arise from that exploration. Subtype 2 studies isolate and examine particular factors, mechanisms, or relationships within a phenomenon, allowing researchers to establish causal links and understand how different variables interact. This focused investigation is essential for building actionable knowledge that can directly inform the design of interactive techniques or systems.

- The concept of "interesting" research questions is paramount in this subtype of empirical studies. Drawing from decades of HCI research, questions become compelling when they: (1) address fundamental interaction challenges that persist across technologies and contexts (e.g., input accuracy, cognitive load, social dynamics); (2) challenge existing assumptions or reveal counterintuitive relationships; (3) bridge theoretical understanding with practical implications for design; (4) tackle emerging challenges brought by new technologies or user behaviors; and (5) offer insights that could generalize beyond the specific context being studied. The most impactful work often combines several of these aspects, for instance by examining how established interaction principles manifest differently in novel technological contexts, or by investigating how theoretical frameworks from other disciplines might inform our understanding of persistent HCI challenges.

Example papers:

3.3 A formula and best practices for successful empirical research

Successful empirical research hinges on two key elements:

- Interesting results/insights

- Rigorous methodology/data support

These elements map onto a two-stage research process:

★ Stage 1: Finding "Interesting" Insights

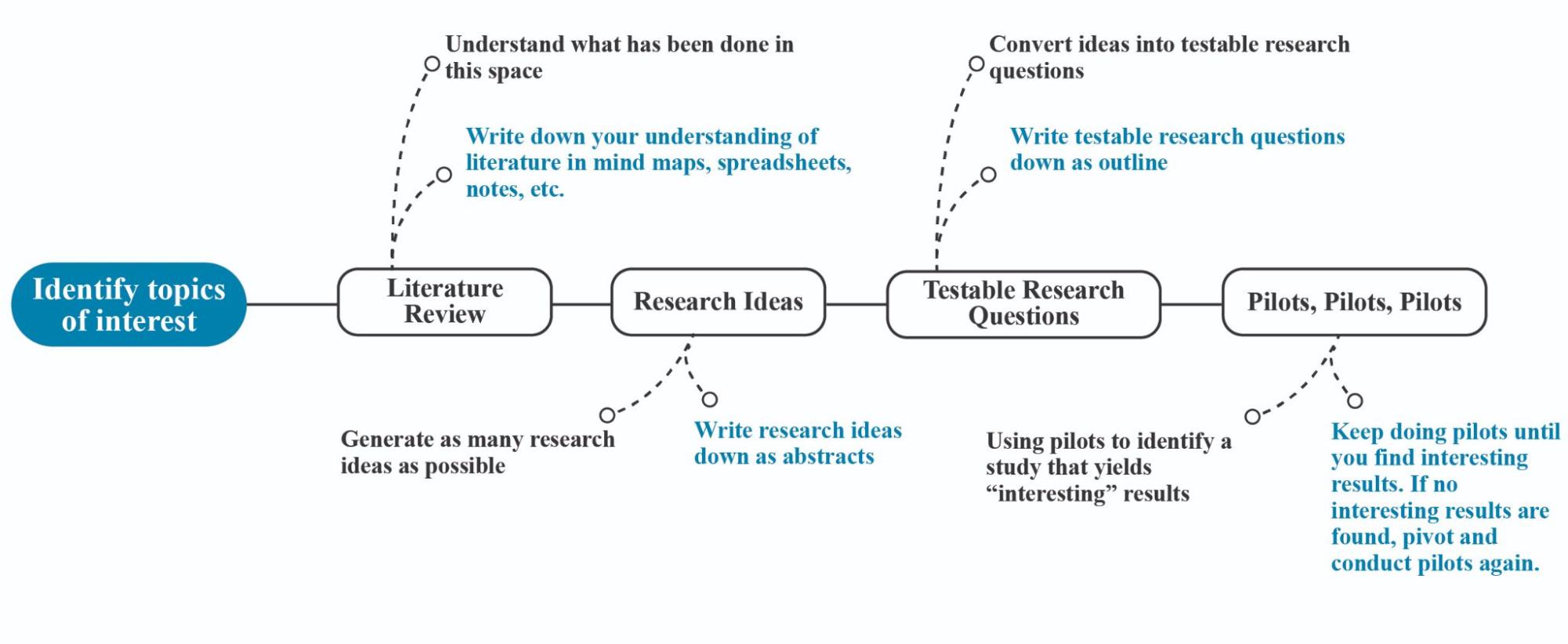

- Identifying compelling phenomena, effects, or relationships that warrant investigation (see Figure 3.2)

★ Stage 2: Ensuring Methodological Rigor

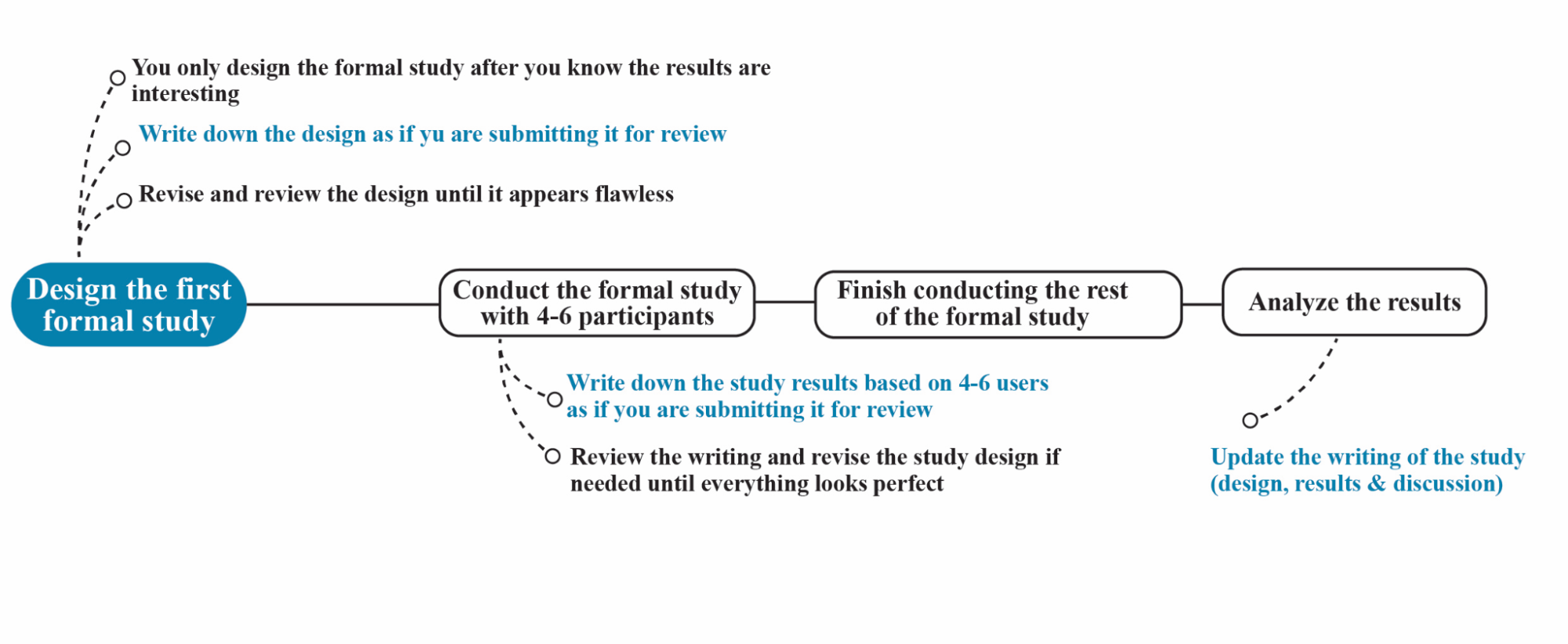

- Systematic investigation with proper research methods (see Figure 3.3)

The manifestation of these stages differs between the two empirical subtypes:

3.3.1 Stage 1: Identifying Interesting Insights

For Subtype 1 (Phenomenon Studies), Stage 1 focuses on identifying and validating an interesting phenomenon to study. This process begins with selecting emerging and timely phenomena that capture attention in the HCI community. Researchers should carefully scan the landscape for novel technological practices, social behaviors, or interaction patterns that have not been extensively studied in prior literature.

The phenomenon must demonstrate meaningful societal impact or address critical needs of specific user groups. This could manifest through broad reach affecting many users, or by tackling important challenges faced by underserved communities. Through preliminary observations and interviews, researchers map out key activities and interactions within the phenomenon to understand its scope and characteristics.

When evaluating a phenomenon's research potential, it's crucial to identify aspects that connect to core HCI interests. This includes examining how the phenomenon reveals new patterns of human-computer interaction, challenges existing behavioral theories, suggests implications for future system design, or offers insights that could generalize beyond the specific context. These connections help position the work within the broader HCI research landscape.

Before committing to a full study, researchers should validate the phenomenon's research potential through pilot investigations, such as a handful of interviews or initial field observations. The insights from these activities, along with relevant publications, are then used to construct an outline of the potential paper. This outline is a critical check on whether there are enough interesting insights to justify a full paper. For Subtype 1 (Unknown Phenomenon), the 'Discussion' section of the outline is particularly important: it is the heart and soul of a phenomenon-type paper, where the key conceptual contributions and the overall narrative are defined. Researchers should move to Stage 2 (Ensuring Methodological Rigor) only once these key conceptual contributions are clearly articulated in the outline. Pilot investigations also serve the practical purpose of confirming that the phenomenon is observable and analyzable with available research methods, which is essential for the project to succeed.

For Subtype 2 (factor and effect studies), Stage 1 centers on discovering effects that are both interesting and impactful within HCI research. For a finding to be truly 'interesting' and valuable to the research community, it often needs to offer more than simple confirmation of existing intuitions. Consider, for example, an investigation into how text presentation affects learning. A common assumption, perhaps inspired by educational videos like those from Khan Academy, might be that dynamically revealing text letter by letter enhances engagement and improves learning outcomes. If empirical results align with this intuitive belief, they confirm common understanding but may not offer significant new insights to the community.

However, if pilot investigations suggest a counterintuitive outcome — for instance, that dynamically drawn text hinders learning compared to presenting text all at once — this becomes a much more compelling avenue for research. Such an unexpected result, if rigorously validated and accompanied by a plausible explanation for why it occurs, can provide genuinely useful knowledge. In this scenario, the new insight could significantly influence how designers approach textual presentation to optimize learning.

Identifying genuinely 'interesting' insights (effects that are non-obvious, surprising, or challenge conventional wisdom) is a primary goal at this stage, yet it is often difficult. Many initial ideas, when explored, only confirm common beliefs, yielding results that are not particularly novel. Investing in a full-scale study only to find such predictable outcomes is not cost-effective. This is where pilot studies play a critical role: because they are cheap and quick to conduct, researchers can use them to test numerous hypotheses. The strategy is to iterate through many pilot studies, accepting that most may yield uninteresting results, until a truly surprising or counterintuitive effect emerges. Only then, once an 'interesting' lead is found, is it justifiable to commit to a more resource-intensive Stage 2 study.

One might wonder how reliable findings from pilot studies with small sample sizes (e.g., 4-6 participants) can be. While a pilot study cannot guarantee the statistical validity or 'truth' of an effect, its value is significant. Firstly, strong and impactful effects often become evident even with a small number of participants. If a novel input technique offers genuine advantages, or if certain interface factors have meaningful psychological or behavioral impacts, these should be observable even in preliminary testing. More crucially, pilot studies allow researchers to delve into the reasons behind observed differences, often through methods like participant interviews. This qualitative understanding helps distinguish effects that might be due to chance from those grounded in fundamental reasons. Thus, by conducting pilot studies and intelligently analyzing their results, researchers are more likely to discover genuinely interesting effects that warrant deeper, more rigorous investigation.
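To make the intuition about strong effects concrete, the following sketch estimates how often a small pilot would show a consistent direction of effect. It rests on a simplifying assumption of ours, not a claim from the text: each participant's within-subject difference between two conditions is independent and normally distributed with standardized mean effect d_z, so any one participant favors the new condition with probability Φ(d_z). The function names are hypothetical.

```python
import math


def prob_participant_favors(dz: float) -> float:
    """P(one participant's difference > 0) under the assumed normal model, i.e. Phi(dz)."""
    return 0.5 * (1.0 + math.erf(dz / math.sqrt(2.0)))


def prob_at_least_k_favor(n: int, k: int, dz: float) -> float:
    """Binomial probability that at least k of n pilot participants favor the new condition."""
    p = prob_participant_favors(dz)
    return sum(math.comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


if __name__ == "__main__":
    # Compare no effect, a small effect, and a large effect in a 5-person pilot.
    for dz in (0.0, 0.2, 1.0):
        print(f"dz={dz:.1f}: P(>=4 of 5 favor) = {prob_at_least_k_favor(5, 4, dz):.3f}")
```

Under these assumptions, a large effect (d_z = 1.0) makes at least 4 of 5 participants agree roughly 82% of the time, versus about 30% for a small effect (d_z = 0.2). Note the converse, though: with no true effect, about 19% of 5-person pilots would still show 4 of 5 agreeing by chance, which is why pilot outcomes should be paired with interviews about the reasons behind them.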

Identifying an interesting effect is only the initial step in Stage 1. The crucial next step is to understand why this effect occurs and to develop a compelling, logical explanation. This underlying rationale is often a significant finding in itself, particularly if it illuminates fundamental aspects of human-computer interaction. Such explanations should ideally be grounded in established theories of human cognition, perception, or behavior, or derived from systematic analytical approaches (e.g., a detailed task analysis using the Keystroke-Level Model, or KLM; Hinckley et al., 2006). At a minimum, the reasoning must be logically sound.
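As an illustration of such an analytical approach, the sketch below computes a Keystroke-Level Model prediction by summing per-operator times. The operator values are commonly cited KLM estimates (K ≈ 0.28 s for an average non-secretary typist, P ≈ 1.1 s, H ≈ 0.4 s, M ≈ 1.35 s); treat them as assumptions to recalibrate for your own users and devices. The two task sequences, and the `klm_time` helper, are hypothetical.

```python
# Commonly cited KLM operator times in seconds (assumed values; recalibrate for your users).
OPERATOR_SECONDS = {
    "K": 0.28,  # keystroke or button press (average non-secretary typist)
    "P": 1.10,  # point with a mouse to a target
    "H": 0.40,  # home hands between keyboard and mouse
    "M": 1.35,  # mental preparation
}


def klm_time(sequence: str) -> float:
    """Predict task time by summing operator times for a string such as 'HMPK'."""
    unknown = set(sequence) - set(OPERATOR_SECONDS)
    if unknown:
        raise ValueError(f"unknown KLM operators: {sorted(unknown)}")
    return sum(OPERATOR_SECONDS[op] for op in sequence)


if __name__ == "__main__":
    # Hypothetical comparison: choosing a command from a menu vs. a two-key shortcut.
    menu = "HMPKPK"  # reach for mouse, decide, point+click menu, point+click item
    shortcut = "MKK"  # decide, press modifier and key (hands already on keyboard)
    print(f"menu: {klm_time(menu):.2f} s, shortcut: {klm_time(shortcut):.2f} s")
```

A prediction like this is only as good as the operator sequence and the assumed times, but it shows how an explanation for an observed difference (here, homing and pointing costs) can fall directly out of a systematic analysis rather than intuition alone.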

To uncover these explanations, researchers must actively investigate the 'why' behind an observed effect. When a pilot study reveals a potentially interesting outcome, interviewing participants is a valuable method to understand their experiences and perceived reasons for their actions or preferences. However, participant insights alone may not suffice. It is critically important for researchers to also immerse themselves in the pilot study conditions, experiencing the tasks or interfaces firsthand. This direct engagement can provide an intuitive 'feel' for why one condition might differ from another, offering insights that participants might not articulate or even be consciously aware of. This self-experimentation helps distinguish robust, explainable phenomena from coincidental observations.

The most valuable research directions emerge when observed effects not only reproduce reliably but are also accompanied by a strong explanatory framework that connects them to broader principles of interaction design or HCI theory. For instance, discovering that users consistently violate an established design guideline under specific conditions becomes far more impactful when researchers can articulate why this violation occurs, potentially revealing limitations in our current understanding or the guideline itself. Ultimately, the goal at this stage is not to achieve statistical significance, but to identify robust effects that are explainable, theoretically or logically grounded, and have clear implications for advancing HCI research and practice.

Figure 3.2: Roadmap for identifying and refining research topics.

Common Challenges in Stage 1 and Strategies to Address Them:

Navigating Stage 1 of empirical research effectively involves overcoming common hurdles. Beginner researchers often struggle with generating interesting ideas, managing their time efficiently, and dealing with the inherent frustrations of the exploratory process. Addressing these challenges systematically can lead to more fruitful outcomes.

- "I have tried many ideas, but none seem to be interesting."

This common frustration can often be addressed from several angles. Firstly, a thorough literature review is indispensable. Understanding prior work allows researchers to build on existing knowledge rather than reinventing the wheel, identify genuine gaps where new contributions can be made, recognize emerging trends that might signal fertile research areas, and connect their nascent ideas to established theoretical frameworks, thereby grounding them in existing scholarly discourse.

Secondly, it's crucial to develop a nuanced understanding of what counts as 'interesting' in HCI. The field typically values research that pushes boundaries by challenging existing paradigms or assumptions, addresses significant and demonstrable user needs, offers novel solutions to complex interaction problems, or provides compelling empirical insights that deepen our understanding of how humans interact with technology.

A critical distinction must also be made between engineering problems and research problems. Engineering problems are often solution-focused, aiming to fix a specific issue in a particular context. In contrast, research problems seek to generate generalizable knowledge about interaction, asking broader questions that can inform theory and practice beyond a single application. Focusing on the latter is key to developing impactful research.

Specific strategies can then be applied depending on the study subtype. For Phenomenon Studies, researchers should focus on emerging technologies or novel user behaviors that are not yet well understood. It's important to look for phenomena with broader societal implications, as this often signals significance. Ensuring practical access to the relevant population for study is a logistical necessity, and initial ideas should be validated through preliminary data collection, such as a few exploratory interviews or observations, to confirm the phenomenon's research-worthiness before committing extensive resources.

For Factor/Effect Studies, a productive strategy involves systematically mapping the design space of interest to identify potential variables and their interactions. Researchers might test extreme parameters or conditions where effects are more likely to be pronounced or surprising. Employing rapid prototyping techniques allows for quick iteration and testing of different design variations, while building on established theoretical frameworks can guide the selection of factors and the formulation of hypotheses about their effects.
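Systematically mapping a design space can start as simply as listing candidate factors and their levels, then enumerating the full-factorial grid of conditions as a checklist for rapid pilot probes. The factor names and levels below are hypothetical, loosely modeled on the text-spacing example discussed later in this chapter.

```python
from itertools import product

# Hypothetical factors and levels; replace with the variables in your own design space.
DESIGN_SPACE = {
    "word_spacing": ["default", "wide"],
    "line_spacing": ["1.0", "1.5", "2.0"],
    "text_position": ["center", "bottom"],
}


def enumerate_conditions(space: dict[str, list[str]]) -> list[dict[str, str]]:
    """Return every combination of factor levels (a full-factorial design)."""
    names = list(space)
    return [dict(zip(names, levels)) for levels in product(*(space[n] for n in names))]


if __name__ == "__main__":
    conditions = enumerate_conditions(DESIGN_SPACE)
    print(f"{len(conditions)} conditions")  # 2 * 3 * 2 = 12
    for cond in conditions[:3]:
        print(cond)
```

Because the number of conditions grows multiplicatively with each added factor, it is often sensible to pilot only the extreme levels of each factor first, as the text suggests, and fill in the rest of the grid only if an effect appears.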

- "I waste too much time on things that are not necessary at this stage."

Efficiency in Stage 1 hinges on maintaining a sharp focus on the primary goal: determining whether an idea has the potential to yield interesting results. This means prioritizing activities that directly contribute to this assessment and deferring those that are premature.

For Phenomenon Studies, the emphasis should be on establishing the significance and research-worthiness of the phenomenon before launching into a detailed, comprehensive investigation. This often involves using rapid, lightweight methods initially, such as quick environmental scans, informal discussions with potential users, or analysis of publicly available data. The aim is to gather just enough minimal validation data to make an informed decision about whether to proceed, rather than attempting to collect exhaustive data at this early stage.

Similarly, for Factor/Effect Studies, the priority is to quickly identify clear and potentially impactful effects. Techniques like Wizard of Oz, where a human simulates system functionality, can be invaluable for testing interaction concepts without building fully functional prototypes. The focus should be squarely on finding these clear effects and understanding their potential implications, while consciously avoiding premature optimization of the interface, system performance, or experimental design, as these can consume significant time and effort for ideas that may ultimately prove uninteresting.

- "I'm feeling impatient and frustrated with no interesting results."

The exploratory nature of Stage 1 can indeed be a source of impatience and frustration, especially when interesting results are not immediately forthcoming. Several strategies can help manage these feelings and maintain momentum.

Firstly, seeking expert guidance from mentors, supervisors, or senior colleagues can be immensely beneficial. Experienced researchers can offer fresh perspectives, help troubleshoot conceptual roadblocks, suggest alternative approaches, or validate whether an idea has merit. Their insights can often help reframe a problem or identify overlooked opportunities.

Secondly, it is important to be flexible and consider alternative directions if an initial line of inquiry proves unfruitful after a reasonable period of exploration. Research is rarely a linear process, and the willingness to pivot or abandon unpromising ideas is a hallmark of an effective researcher.

Finally, a practical strategy to mitigate frustration and increase the probability of success is to maintain multiple parallel investigations. By exploring several potential ideas or hypotheses concurrently, researchers are less dependent on any single idea panning out. This approach not only diversifies risk but also ensures that progress can be made on other fronts if one avenue stalls, thereby fostering a more continuous sense of advancement.

3.3.2 Stage 2: Applying Rigorous Methodology

Before transitioning to Stage 2, it is crucial to ensure that sufficient conceptual contributions have been identified in Stage 1. This means the core conceptual elements of your research paper should be largely established. Stage 2 then focuses on applying solid, rigorous methodology to validate and ensure the trustworthiness of these Stage 1 findings.

Determining whether there are 'enough contributions' can be challenging, especially for beginner researchers. Therefore, it is essential to consult with your research mentor. Only proceed to Stage 2 if your mentor confirms that the conceptual contributions from your Stage 1 investigation are substantial enough.

In Stage 2, the specific rigorous methodology employed will depend on the type of empirical research you are conducting.

For Phenomenon-based research (Subtype 1), you will likely rely on methods such as ethnographic studies, field studies, and interview studies. The key activities include:

- Design comprehensive studies that document the phenomenon

- Collect rigorous data through ethnography, interviews, or field studies

- Analyze systematically to extract patterns

- Validate through triangulation and member checking

For Factor/Effect research (Subtype 2), the design of controlled studies is paramount. The key activities include:

- Design formal controlled experiments

- Collect comprehensive data to identify mechanisms and factors and to understand edge cases

- Apply statistical analyses to model variable relationships and quantify effect sizes

- Ensure reproducibility through detailed documentation, shared code and data, and cross-context validation

- Generate insights via qualitative analysis, triangulation across multiple data sources, and theoretical refinement

- Connect findings to broader implications for design guidelines, theoretical frameworks, and future research
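For the step of quantifying effect sizes, a within-subjects comparison of two conditions is often summarized with the paired t statistic and a standardized effect size such as Cohen's d_z (the mean of the per-participant differences divided by their standard deviation). The sketch below uses only Python's standard library and made-up reading times; the `paired_effect` helper is hypothetical. In practice, p-values and confidence intervals would come from a statistics package (for example, SciPy's `scipy.stats.ttest_rel`).

```python
import math
from statistics import mean, stdev


def paired_effect(cond_a: list[float], cond_b: list[float]) -> tuple[float, float]:
    """Return (Cohen's d_z, paired t statistic) for per-participant scores in two conditions."""
    if len(cond_a) != len(cond_b) or len(cond_a) < 2:
        raise ValueError("need two equal-length samples with at least 2 participants")
    diffs = [a - b for a, b in zip(cond_a, cond_b)]
    sd = stdev(diffs)  # sample SD of the differences (n - 1 denominator)
    if sd == 0:
        raise ValueError("differences have zero variance")
    dz = mean(diffs) / sd
    t = dz * math.sqrt(len(diffs))  # t = mean / (sd / sqrt(n)) = dz * sqrt(n)
    return dz, t


if __name__ == "__main__":
    # Hypothetical reading times (s) for six participants under two layouts.
    baseline = [30.0, 28.0, 35.0, 33.0, 29.0, 31.0]
    new_layout = [28.0, 25.0, 34.0, 29.0, 27.0, 28.0]
    dz, t = paired_effect(baseline, new_layout)
    print(f"d_z = {dz:.2f}, t(5) = {t:.2f}")
```

Reporting the effect size alongside the test statistic lets readers judge practical importance, not just whether a difference is unlikely under chance.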

Ensuring methodological rigor in Stage 2 is paramount. This involves several critical considerations, akin to meticulously planning and documenting an empirical study:

- Alignment with Research Objectives: The foundation of a rigorous methodology is its direct alignment with the research questions and goals. Every chosen method and procedural step must clearly serve the purpose of addressing these objectives. For instance, if your goal is to understand user behavior in a specific context, your chosen data collection method (e.g., observation, interviews) must be well-suited for capturing that behavior.

- Justification of Methodological Choices: Each component of your methodology — from participant selection and task design to data collection techniques and analysis plans — requires explicit justification. This rationale should be grounded in established research practices (supported by citations to relevant literature) or, for novel approaches, clear logical reasoning demonstrating its appropriateness.

- Detailed Articulation of the Study Protocol: Especially for complex empirical studies, rigor demands a detailed, step-by-step articulation of the entire research design. This often takes the form of a study protocol, outlining how variables will be operationalized, the precise procedures for data collection, and the intended methods for data analysis.

- Proactive Identification and Mitigation of Flaws: A rigorous design anticipates potential threats to the study's validity. This includes foreseeing and planning to control for confounding variables, minimizing potential biases, and selecting appropriate statistical analyses to avoid errors. The anticipated results should also flow logically from the study design and the theoretical underpinnings of your research.
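One common way to control order effects, a frequent confound in within-subjects comparisons, is counterbalancing with a balanced Latin square (a Williams design). Each condition appears in each position equally often and, for an even number of conditions, each condition immediately follows every other condition exactly once. The sketch below uses the standard construction (first row 0, 1, n-1, 2, n-2, ..., each later row shifted by one); for an odd number of conditions it also appends the mirrored rows, so each ordered pair then occurs twice. The condition labels are hypothetical.

```python
def balanced_latin_square(conditions: list[str]) -> list[list[str]]:
    """Return condition orders forming a balanced Latin square (Williams design)."""
    n = len(conditions)
    # First-row pattern: 0, 1, n-1, 2, n-2, ...
    first = []
    for j in range(n):
        if j == 0:
            first.append(0)
        elif j % 2 == 1:
            first.append((j + 1) // 2)
        else:
            first.append(n - j // 2)
    # Each subsequent row shifts every entry by one (mod n).
    rows = [[(idx + r) % n for idx in first] for r in range(n)]
    if n % 2 == 1:
        # With an odd number of conditions, mirrored rows are needed for first-order balance.
        rows += [list(reversed(row)) for row in rows]
    return [[conditions[i] for i in row] for row in rows]


if __name__ == "__main__":
    # Hypothetical conditions for a within-subjects text-layout study.
    orders = balanced_latin_square(["A", "B", "C", "D"])
    for participant in range(8):
        # Cycle through the rows so each order is used equally often.
        print(participant + 1, orders[participant % len(orders)])
```

Recruiting a multiple of the number of rows keeps the design fully balanced; order can then also be entered as a factor in the analysis to check for residual carryover.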

Achieving this level of rigor can seem intimidating, particularly for beginner researchers. However, it's crucial to understand that crafting a robust methodology is an iterative process, not something achieved perfectly on the first attempt. Don't be afraid if your initial ideas have gaps; this is a natural part of research. The key is patience and a willingness to refine.

A highly effective, and indeed critical, strategy is to write down your complete study plan in detail, as if it were the methods section of your final research paper, before you conduct the full study. This act of writing itself is a powerful tool for identifying potential logical inconsistencies, overlooked variables, or procedural flaws. Furthermore, this detailed written plan becomes an invaluable document that you can share with your mentor, peers, or experienced colleagues to solicit critical feedback.

Remember, fixing a methodological flaw after you have prepared everything for a study, and especially after you have begun testing participants, is incredibly expensive. If issues are discovered mid-study, it often means that the data collected from those initial participants may no longer be valid for your main analysis and might, at best, be treated as pilot data. Thus, this pre-data collection review, facilitated by a thoroughly written plan, is crucial for identifying and rectifying problems before you invest significant time and resources, significantly strengthening your study's rigor. Embrace this cycle of meticulous planning through writing, seeking feedback, and iterative revision; it's a cornerstone of developing sound and publishable research.

Figure 3.3: Roadmap for designing the first formal study rigorously.

During the study's execution, even after the initial plan receives mentor approval, continuous vigilance and an iterative approach remain essential.

Firstly, anticipate and address emergent issues effectively. Flaws, unexpected results, or bugs can surface at any point, particularly in the early stages. Therefore, conduct periodic reviews, especially as you begin the study. Proactively check and validate procedures and initial data. It is crucial to address potential errors promptly and only proceed with broader data collection (e.g., recruiting the full set of participants) once you are confident that your methods are sound and the initial phase of the study is running smoothly.

Secondly, maintain meticulous documentation while actively pursuing insights. Record all procedures, observations, and findings in detail. Crucially, remember that insights are what you are seeking. These can emerge from ongoing study observations and participant interactions, so be prepared to update your research outline or writing as results are obtained. If you find that initial results are not yielding sufficiently interesting insights, you may need to adjust the study to find a more fruitful angle to investigate the problem.

Throughout this dynamic process, continue to solicit feedback from mentors. A study that is thus well-defined, iteratively refined, meticulously executed, and yields results consistent with well-grounded expectations is one that generally meets the standards for publication.

Let's dive into a real-world example to see how these research stages can play out and, importantly, what happens when the ideal path takes a few detours. We'll look at the paper "Not All Spacings are Created Equal" (Zhou et al., 2023), an example of Subtype 2 research (unknown factor/effect) that explored a seemingly simple question with significant implications: how does text spacing impact our ability to read on optical see-through head-mounted displays (OHMDs) while staying aware of our surroundings?

Stage 1: The Spark of an Idea – Initial Insight Development

The journey for this specific research didn't start with an isolated observation about OHMDs, but rather evolved from an earlier investigation by the team. In that prior study, they explored how to effectively lay out video information for on-the-go viewing with smart-glasses. A particularly interesting observation arose from considering scenarios akin to an educational video where a diagram is annotated with multiple text labels. The team found that when such textual labels were displayed — for instance, to explain different parts of a visual figure — users expressed a strong preference for ample spacing between the individual labels. This preference wasn't merely for the clarity of each label in isolation, but more crucially, because larger gaps allowed them to better see the underlying diagram or, in the broader context of smart-glasses, more of their physical environment that the labels might be overlaying or adjacent to. The insight gleaned was that these gaps were not just empty space, but functional, making it easier for users to observe their surroundings while simultaneously processing information on the display.

This insight directly led to an open, previously unexplored research question pertinent to OHMDs: how should text spacing be configured when reading on such devices during multitasking situations? While previous approaches to text display on OHMDs had primarily focused on where the text should be displayed (e.g., a particular location in the viewport), the internal spacing of the text itself — between words and lines — typically defaulted to standard settings. The new question was whether these default text spacings were still optimal in the demanding dual-task context of OHMD usage. Building on this, the team had a hunch: what if tweaking the spacing between words and lines could make it easier to absorb information from the display and keep an eye on what's around you? This was their core hypothesis — a potential breakthrough for OHMD usability.

But a hunch isn't enough. To see if they were onto something, they didn't immediately launch into massive, complex experiments. Instead, they embraced the spirit of Stage 1 validation with quick, nimble pilot studies:

- They informally tested various text layouts with a handful of participants (3-4 people) who were literally walking around — a scenario mimicking real-world OHMD use.

- Intriguingly, they noticed that certain spacing configurations appeared to boost both reading speed and the participants' awareness of their environment. These weren't definitive proofs yet, but they were strong, encouraging signals.

The team knew they had struck a promising vein when three things aligned:

- Consistent User Benefit: Participants weren't just anecdotally preferring some layouts; they were performing better with specific spacing.

- Plausible Explanation: They could articulate a logical reason why these changes might be working — the new layouts seemed to help users distribute their visual attention more effectively between the text and the world.

- Subjective Confirmation: When the researchers tried it themselves, their own experiences mirrored their hypotheses and the pilot participants' feedback. This alignment of observation, theory, and personal experience gave them the confidence to dig deeper.

This initial exploration, while sounding straightforward, was a substantial undertaking in itself, spanning nearly six months. The extended duration wasn't due to a lack of focus, but rather the inherent complexity of navigating uncharted territory. There were numerous subtle details and a wide array of potential configurations to test, even in these early pilot phases. Furthermore, the research was spearheaded by a researcher who was relatively new to this specific domain of HCI methodology. A significant portion of this time involved her learning and refining the research methods concurrently with conducting the pilot studies. This learning curve, natural for a beginner researcher, meant the process wasn't always optimally efficient.

Stage 2: The Path to Proof — Systematic Investigation (with a Twist)

With their initial insight showing promise, the team was ready for the rigor of Stage 2: systematic investigation. They designed a series of three formal studies to dissect the phenomenon. However, this is where their journey offers a crucial lesson in research strategy.

Ideally, after validating a core insight (Stage 1), the next step in a factor/effect study is to conduct a critical validation study. Scientifically, this means an experiment designed to rigorously establish the key contribution by providing robust evidence that the proposed approach offers a significant advantage over existing or alternative solutions, as the primary hypothesis claims. For this team, critical validation required demonstrating that their novel text spacing, which uses larger-than-usual gaps to distribute text more evenly across the viewport, could significantly outperform traditional methods that present the same amount of text more compactly in one location (leaving other areas of the viewport clear). Confirming this central hypothesis in a full study was crucial to the research's viability. The entire premise hinged on it, and the pilot studies, however encouraging, could only provide preliminary indications. However, because of the team's relative inexperience, this essential step was not carried out until much later.

Here's how their studies actually unfolded:

- Studies 1 & 2 (Mechanism Deep Dive – Conducted First):

- Study 1: Systematically explored a wide array of spacing configurations to understand the landscape of possibilities.

- Study 2: Homed in on the specifics, investigating the interplay between word spacing and line spacing.

These two studies explored various sub-factors in detail to build a more nuanced understanding of text spacing. Such studies are valuable, but they become truly meaningful only after a critical validation study (like Study 3) has established that the novel spacing strategy actually outperforms traditional methods. Ideally, they should therefore be conducted afterward.

- Study 3 (The Critical Validation — Conducted Last): This was the pivotal experiment. It directly compared their optimized spacing with conventional methods. This study ultimately validated their core contribution, demonstrating the superiority of their approach. However, in retrospect, the team realized this crucial "go/no-go" study should have been their first formal experiment, not their last.

★ Key Lessons: The Price of an Out-of-Order Approach

While the research was ultimately successful, the team's decision to conduct the mechanism studies (1 & 2) before the critical validation study (3) introduced significant inefficiencies and risks. This out-of-order approach meant they:

- Gambled on an Unconfirmed Premise: They invested considerable time and resources exploring the nuances of how their idea worked (Studies 1 & 2) without first robustly confirming whether it offered a genuine advantage over existing methods. Had Study 3 shown their core hypothesis to be flawed or no better than the status quo, much of the effort on Studies 1 and 2 could have been rendered moot or its value significantly diminished. While their core hypothesis fortunately held true, this sequencing placed a large part of their research investment at unnecessary risk.

- Forfeited Early Course Correction and Confidence: Prioritizing the critical validation study (Study 3) would have provided an early, crucial go/no-go decision. It would either have quickly validated their core assumption, allowing them to proceed with greater confidence and a solid foundation, or signaled the need to pivot if the results were unfavorable. This early feedback loop would have reduced uncertainty and prevented deeper investment in studying the mechanisms of an unproven effect.

But what if, one might ask, Studies 1 and 2 were actually necessary to help define some of the experimental setups for Study 3? In that case, shouldn't they be conducted first? If such a dependency exists, it's understandable to consider conducting Studies 1 and 2 initially. However, given that the critical validation (Study 3) would still be pending, a more cautious approach is advisable. One could conduct Studies 1 and 2 as pilot studies first. Once the setups are reasonably determined from these pilots, the team should then quickly move to Study 3. Study 3 itself could also be conducted as a pilot study initially to ensure the key hypotheses are not fundamentally flawed. After this crucial early check, one can then revisit and complete the full-scale Studies 1 and 2. In any case, the overarching goal should be to find ways to ascertain the likely outcome of Study 3 as soon as possible to avoid investing heavily in a potentially flawed premise.

The Team's Hard-Won Wisdom for Future Researchers:

Reflecting on their process, the team strongly advocates for a more structured path:

- Validate First, Then Explore: Rigorously test your core insights with robust pilot studies before committing to extensive, resource-intensive formal studies.

- Document Everything: Keep meticulous records of all observations, procedures, and findings, even during the earliest, most informal explorations.

- Prioritize the 'Winning Case': Conduct your critical validation study — the one that tests your core contribution against alternatives — as early as possible in Stage 2. This is your primary go/no-go checkpoint.

- Analyze Pilots Systematically: Don't just rely on gut feelings from pilot data. Apply systematic analysis methods to extract meaningful signals.

- Mechanism Studies Follow Success: Only once you have clear, compelling evidence that your core effect is real and significant should you dive deep into understanding the underlying mechanisms.
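The "Analyze Pilots Systematically" advice can be made concrete with a small script. The sketch below is a minimal example. It assumes a within-subjects pilot in which each participant read under both a baseline layout and a new spacing layout, and it assumes that higher scores are better. The script computes each participant's difference, counts how many participants improved, and reports Cohen's d_z as a rough effect-size signal. Cohen's d_z is the mean difference divided by the standard deviation of the differences. The function name `paired_pilot_summary` and the reading-speed numbers are hypothetical and are not taken from the study.

```python
from statistics import mean, stdev


def paired_pilot_summary(baseline, treatment):
    """Summarize a within-subjects pilot comparison (hypothetical helper).

    baseline, treatment: per-participant scores in the same participant order,
    e.g. reading speed (words per minute) under each layout.
    Assumes higher scores are better.
    """
    if len(baseline) != len(treatment):
        raise ValueError("baseline and treatment need one score per participant")
    if len(baseline) < 2:
        raise ValueError("need at least two participants to estimate variability")

    # Per-participant difference: positive means the new layout scored higher.
    diffs = [t - b for b, t in zip(baseline, treatment)]
    mean_diff = mean(diffs)
    sd_diff = stdev(diffs)  # sample standard deviation (n - 1 denominator)

    # Cohen's d_z for paired data; undefined when all differences are identical.
    d_z = mean_diff / sd_diff if sd_diff > 0 else None

    return {
        "n": len(diffs),
        "improved": sum(1 for d in diffs if d > 0),
        "mean_diff": mean_diff,
        "sd_diff": sd_diff,
        "d_z": d_z,
    }


# Invented pilot data for four participants (not from the study).
summary = paired_pilot_summary(
    baseline=[180, 165, 200, 172],
    treatment=[195, 170, 214, 185],
)
print(summary)
```

With only three or four participants, these numbers are signals for deciding whether to proceed, not proof. An effect size estimated from such a small pilot is highly unstable, which is why the formal critical validation study remains necessary.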

The Power of Staging, Even with Detours

Despite the less-than-optimal sequencing, the project still demonstrates the underlying power of a staged thought process:

- Stage 1 (Insight Validation): Their initial pilot work successfully confirmed that the core idea — spacing's effect on dual-task performance — was worth pursuing.

- Stage 2 (Systematic Investigation): Their formal studies, though not ideally ordered, systematically built the theoretical framework and empirical evidence needed to support their claims.

This case study, with its valuable 'what we learned' moments, powerfully illustrates how adhering more closely to a staged approach — validating core insights before deep mechanism dives, and tackling critical 'Winning Case' studies early — can lead to more efficient, less risky, and ultimately more impactful research outcomes. Their journey provides a rich template, and a few cautionary notes, for anyone embarking on similar HCI research projects.

References

Hinckley, K., Guimbretière, F., Baudisch, P., Sarin, R., Agrawala, M., & Cutrell, E. (2006, April). The Springboard: Multiple Modes in One Spring-Loaded Control. In Proceedings of the SIGCHI Conference on Human Factors in Computing Systems (pp. 181-190).

Zhou, C., Fennedy, K., Tan, F. F. Y., Zhao, S., & Shao, Y. (2023, April). Not All Spacings are Created Equal: The Effect of Text Spacings in On-the-go Reading Using Optical See-Through Head-Mounted Displays. In Proceedings of the 2023 CHI Conference on Human Factors in Computing Systems (pp. 1-19).